In recent years, the self-driving car industry has made a huge leap toward a driverless future, with Israeli companies such as Mobileye, Innoviz, and Cognata paving the way. Today, unmanned vehicles can handle a wide range of weather conditions, navigate busy city streets, and park like a pro.

Still, this huge leap for the industry seems a mere baby step for humanity: many consumers say they would prefer a regular car to even the most sophisticated autonomous vehicle. The reason is simple: people don't believe these vehicles are safe enough.

Much-publicized reports of accidents involving autonomous vehicles only add fuel to the fire, nurturing the distrust of prospective users. Meanwhile, the real numbers often go unnoticed, even though they show how rarely such accidents occur compared with those caused by human error.

To make the driverless dream come true, manufacturers and software development companies need to make the technology not just safer, but legendarily safe.

In this article, we have collected the features that are fundamental to the safety of driverless transportation.

Sensor Fusion

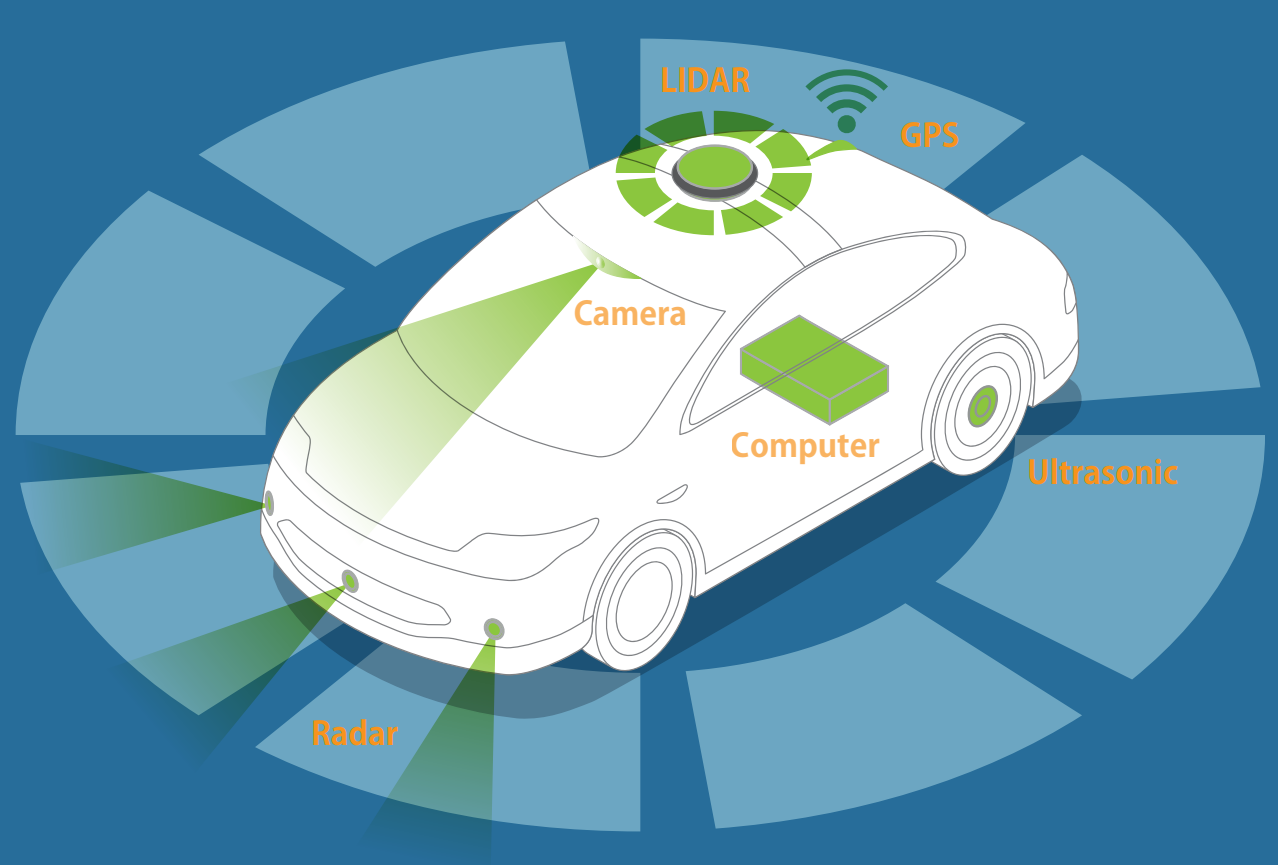

Many cars on the streets today, and even more in R&D labs, feature some form of advanced driver assistance system (ADAS). These systems are built on sensor bundles that combine cameras, radar, ultrasound, or lidar. Such sensors are indispensable to self-driving technology: common features such as emergency braking, adaptive cruise control, and automatic parking could not exist without them.

Sensors are the vehicle's eyes and ears. Like human sense organs, each type of sensor is tailored to perceive a specific kind of data and has its own pros and cons. However, you can't overcome the shortcomings of one sensor type simply by installing more of the same. Instead, you need to combine data from different sources to derive the most accurate picture of the world around the metal box.

Here is a sneak peek at the equipment you need to make a car “see”:

Surround View Cameras

The classic setup consists of four cameras: one at the front, one at the rear, and one on each outside rearview mirror. Together they provide viewpoints that were previously unobtainable from inside the car. This is especially useful when changing lanes or parallel parking.

Not only can the system monitor the area around the vehicle, it can also identify pedestrians, lane markings, and road signs. However, its view is stitched together from several 2D images. That makes it poorly suited to measuring how fast surrounding objects move and how far away they are. It is also less effective in heavy rain or fog.
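Why can't a single camera measure distance on its own? In the standard pinhole-camera model, consider an object of real height H that appears h pixels tall through a lens with focal length f (in pixels). That object is roughly f·H/h away. The image gives you h but not H, so the system has to guess the object's size. The sketch below uses a hypothetical focal length and assumed object heights to show how much that guess matters.

```python
def pinhole_distance(focal_px: float, real_height_m: float, image_height_px: float) -> float:
    """Estimate the distance (m) to an object from its apparent height in the image.

    Pinhole model: image_height_px = focal_px * real_height_m / distance.
    real_height_m cannot be read from the image; it has to be assumed.
    """
    if image_height_px <= 0:
        raise ValueError("object must have a positive pixel height")
    return focal_px * real_height_m / image_height_px

FOCAL_PX = 1000.0    # hypothetical focal length in pixels
OBSERVED_PX = 50.0   # the object spans 50 pixels vertically

as_car = pinhole_distance(FOCAL_PX, 1.5, OBSERVED_PX)    # assume a 1.5 m tall car
as_child = pinhole_distance(FOCAL_PX, 1.0, OBSERVED_PX)  # assume a 1.0 m tall child
print(f"assumed car: {as_car:.1f} m, assumed child: {as_child:.1f} m")
# assumed car: 30.0 m, assumed child: 20.0 m
```

The same 50-pixel blob could be 30 m or 20 m away depending on what the system believes it is. Any speed estimated from successive frames inherits that error.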

Radar

Radar is used to estimate distances and velocities. On the downside, its signatures are pretty sparse. It can tell you that an object 30 meters ahead is moving at 45 mph, but it won't tell you whether it is a car, a motorbike, or Usain Bolt back on track.
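Radar gets distance from the round-trip time of its echo (d = c·t/2). It gets relative speed from the Doppler shift of the returned signal (v = f_d·c / (2·f_0)). The sketch below assumes a 77 GHz carrier, a band commonly used by automotive radar. The echo timing and Doppler shift are made-up values chosen to reproduce the 30 m / 45 mph example.

```python
SPEED_OF_LIGHT = 299_792_458.0  # m/s
MPH_PER_MS = 2.236936           # 1 m/s is about 2.236936 mph

def radar_range(round_trip_s: float) -> float:
    """Distance to the target; the echo travels there and back, hence the division by 2."""
    return SPEED_OF_LIGHT * round_trip_s / 2

def radar_closing_speed(doppler_shift_hz: float, carrier_hz: float = 77e9) -> float:
    """Relative radial speed (m/s) from the Doppler shift; positive means the gap is closing.

    The 77 GHz default carrier is an assumption typical of automotive radar.
    """
    return doppler_shift_hz * SPEED_OF_LIGHT / (2 * carrier_hz)

# Illustrative echo: returns after 200 ns with a +10,333 Hz Doppler shift.
distance_m = radar_range(200e-9)
closing_ms = radar_closing_speed(10_333.0)
print(f"{distance_m:.1f} m ahead, closing at {closing_ms * MPH_PER_MS:.1f} mph")
# 30.0 m ahead, closing at 45.0 mph
```

Doppler yields speed relative to the radar, here how fast the gap is closing. The car's own speed has to be factored in to recover the other object's ground speed. Neither number says what the object is, which is the sparsity described above.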

Lidar

Lidar is the golden mean between camera and radar. It builds a 3D point cloud by emitting short pulses of laser light and measuring how long they take to bounce off objects and return to the sensor.
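Each lidar return is a time of flight plus the direction the beam was pointing. Converting those into Cartesian coordinates yields one point, and a full sweep yields the point cloud. Below is a minimal sketch with made-up return times. It assumes a sensor frame with x forward, y left and z up; conventions vary between vendors.

```python
import math
from typing import NamedTuple

SPEED_OF_LIGHT = 299_792_458.0  # m/s

class Point(NamedTuple):
    x: float  # forward, m
    y: float  # left, m
    z: float  # up, m

def lidar_point(time_of_flight_s: float, azimuth_deg: float, elevation_deg: float) -> Point:
    """Convert one laser return into a 3D point in the (assumed) sensor frame.

    The pulse travels to the target and back, so range = c * t / 2.
    """
    r = SPEED_OF_LIGHT * time_of_flight_s / 2
    az, el = math.radians(azimuth_deg), math.radians(elevation_deg)
    return Point(
        x=r * math.cos(el) * math.cos(az),
        y=r * math.cos(el) * math.sin(az),
        z=r * math.sin(el),
    )

# Illustrative returns: (time of flight in s, azimuth in deg, elevation in deg).
returns = [(66.7e-9, 0.0, 0.0), (66.7e-9, 10.0, -2.0), (133.4e-9, -5.0, 1.0)]
cloud = [lidar_point(t, az, el) for t, az, el in returns]
for p in cloud:
    print(f"x={p.x:6.2f} y={p.y:6.2f} z={p.z:6.2f}")
```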

Lidar has much higher resolution than radar, while still being able to determine distance and speed.

Interpreting lidar data requires considerable computing power, which makes lidar systems prohibitively expensive for most consumers.

Ultrasonic

Ultrasonic sensors work on a principle similar to lidar's, but they use sound waves instead of light waves.

Compared to lidar, ultrasonic sensors are extremely cost-effective. However, their sensing distance rarely exceeds 10 m, which makes them unsuitable for watching the road ahead at driving speed. They are still quite helpful for parking and for low-speed sideways maneuvers.
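Ultrasonic ranging uses the same round-trip arithmetic as lidar, but with the speed of sound. That speed depends on air temperature: roughly 331.3 + 0.606·T m/s for T in °C. The alert thresholds and function names below are hypothetical, chosen only to mimic a typical parking aid.

```python
def speed_of_sound(temp_c: float) -> float:
    """Approximate speed of sound in dry air (m/s); a linear fit valid near everyday temperatures."""
    return 331.3 + 0.606 * temp_c

def echo_distance(echo_time_s: float, temp_c: float = 20.0) -> float:
    """Obstacle distance (m) from the round-trip time of an ultrasonic pulse."""
    return speed_of_sound(temp_c) * echo_time_s / 2

def parking_alert(distance_m: float) -> str:
    """Hypothetical parking-aid zones; the thresholds are illustrative only."""
    if distance_m < 0.3:
        return "STOP"
    if distance_m < 1.0:
        return "fast beeps"
    if distance_m < 2.5:
        return "slow beeps"
    return "clear"

for echo_ms in (2.0, 8.0, 20.0):
    d = echo_distance(echo_ms / 1000)
    print(f"{echo_ms:4.1f} ms -> {d:.2f} m: {parking_alert(d)}")
```

The slowness of sound also hints at why these sensors stay in the parking lot. An echo from 10 m away needs about 58 ms to return, and a car at 100 km/h travels about 1.6 m in that time.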

Data fusion

Suppose the radar tells us that the car ahead is moving at 50 mph, while the lidar shows only 47 mph. We can estimate its most likely speed by merging the two readings with each other and with data from the recent past.
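A simple way to merge two simultaneous readings is an inverse-variance weighted average. Each reading is weighted by how much we trust it, and the result is more certain than either input. The noise figures below are assumptions: radar is taken to be the more precise speed sensor, since it measures speed directly via Doppler. This snippet covers only the two current readings, not the recent history.

```python
def fuse(estimates: list[tuple[float, float]]) -> tuple[float, float]:
    """Inverse-variance weighted average of independent estimates.

    Each estimate is (value, variance). Returns (fused_value, fused_variance).
    """
    weights = [1.0 / var for _, var in estimates]
    total = sum(weights)
    value = sum(w * x for w, (x, _) in zip(weights, estimates)) / total
    return value, 1.0 / total

# Assumed noise: radar std 1 mph (variance 1), lidar std 2 mph (variance 4).
speed, variance = fuse([(50.0, 1.0), (47.0, 4.0)])
print(f"fused speed: {speed:.1f} mph, variance {variance:.2f}")
# fused speed: 49.4 mph, variance 0.80
```

The fused variance (0.8) is lower than the radar's alone (1.0). Adding an independent second sensor helps even when that sensor is noisier.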

One algorithm commonly used for this kind of fusion is the Kalman filter. The principle is simple. The filter predicts the next state from its current estimate, then corrects that prediction with each new sensor reading, giving more weight to whichever source is more reliable. By repeating this cycle, it estimates what the world "most probably" looks like.
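The sketch below is the simplest possible Kalman filter: a one-dimensional version that tracks only the lead car's speed. It assumes that speed stays roughly constant between steps (a random-walk model). The class name, noise variances and readings are hypothetical. Production trackers use a multi-dimensional state (position, velocity and more) and matrix equations, but the predict-and-update cycle is the same.

```python
from dataclasses import dataclass

@dataclass
class ScalarKalman:
    """1D Kalman filter tracking a single quantity (here, the lead car's speed in mph).

    Assumed process model: speed is roughly constant between steps but may drift,
    which is captured by the process-noise variance q.
    """
    x: float  # current estimate
    p: float  # variance of the current estimate
    q: float  # uncertainty added per prediction step

    def predict(self) -> None:
        # Constant-speed model: the estimate stays put, but uncertainty grows.
        self.p += self.q

    def update(self, z: float, r: float) -> None:
        # Blend in measurement z (variance r); gain k favors whichever is more certain.
        k = self.p / (self.p + r)
        self.x += k * (z - self.x)
        self.p *= 1 - k

RADAR_VAR, LIDAR_VAR = 1.0, 4.0  # assumed sensor noise variances (mph^2)

kf = ScalarKalman(x=0.0, p=1000.0, q=0.1)  # vague initial guess
readings = [(50.0, 47.0), (49.2, 48.5), (49.8, 47.9), (50.3, 49.1)]  # illustrative (radar, lidar)
for radar, lidar in readings:
    kf.predict()
    kf.update(radar, RADAR_VAR)
    kf.update(lidar, LIDAR_VAR)
    print(f"estimate {kf.x:.2f} mph, variance {kf.p:.3f}")
```

The variance shrinks with every cycle, so the filter trusts its own history more and reacts less to any single noisy reading.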

Figure: a Kalman filter in action. Red: radar data; blue: lidar data; green: the car's position after applying the Kalman filter.

Emergency braking system

If you have ever tried to catch a troublesome fly with your bare hands, you will agree that human reactions are far from ideal. In fly-catching, that shortcoming is harmless. But when driving in poor visibility, or when pedestrians cross the street outside designated crossings, a slow reaction can take a real, human toll.
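Some quick arithmetic shows why reaction time matters. Total stopping distance is the distance covered before the brakes engage (speed × reaction time) plus the braking distance (v²/2a). The reaction times and deceleration below are assumed round numbers, not measured values.

```python
def stopping_distance(speed_kmh: float, reaction_s: float, decel_ms2: float = 7.0) -> float:
    """Total distance (m) to stop: distance covered while reacting plus braking distance.

    decel_ms2 = 7 m/s^2 is an assumed braking deceleration on dry asphalt.
    """
    v = speed_kmh / 3.6                  # km/h -> m/s
    reaction_dist = v * reaction_s       # the car keeps moving at full speed
    braking_dist = v ** 2 / (2 * decel_ms2)
    return reaction_dist + braking_dist

# Assumed reaction times: about 1.5 s for an attentive human, 0.2 s for an automated system.
human = stopping_distance(50, reaction_s=1.5)
machine = stopping_distance(50, reaction_s=0.2)
print(f"human: {human:.1f} m, machine: {machine:.1f} m, saved: {human - machine:.1f} m")
# human: 34.6 m, machine: 16.6 m, saved: 18.1 m
```

At 50 km/h, the car covers about 13.9 m every second before braking even begins. Under these assumptions, cutting the reaction time from 1.5 s to 0.2 s therefore shortens the stop by roughly 18 m.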

The Automatic Emergency Braking (AEB) system is a core element underlying the safety of both autonomous and human-driven vehicles.

Depending on the level of automation, there are three major types of AEB systems:

1) Collision warning system that recognizes threats and notifies the driver.

2) Dynamic brake support that amplifies the driver's braking if they press the pedal too lightly to avoid the collision.

3) Crash imminent braking system that activates the brakes to slow down or stop the car if the driver does nothing to avoid the collision.

Depending on where and how it is meant to be used, AEB also comes in three categories:

- Low-speed system – designed for city traffic; it identifies potentially hazardous objects to prevent collisions and the minor injuries they cause, such as whiplash;

- Higher-speed system – uses long-range radar to scan up to 200 meters ahead;

- Pedestrian system – specializes in detecting pedestrians and predicting whether they will move into the vehicle's path.

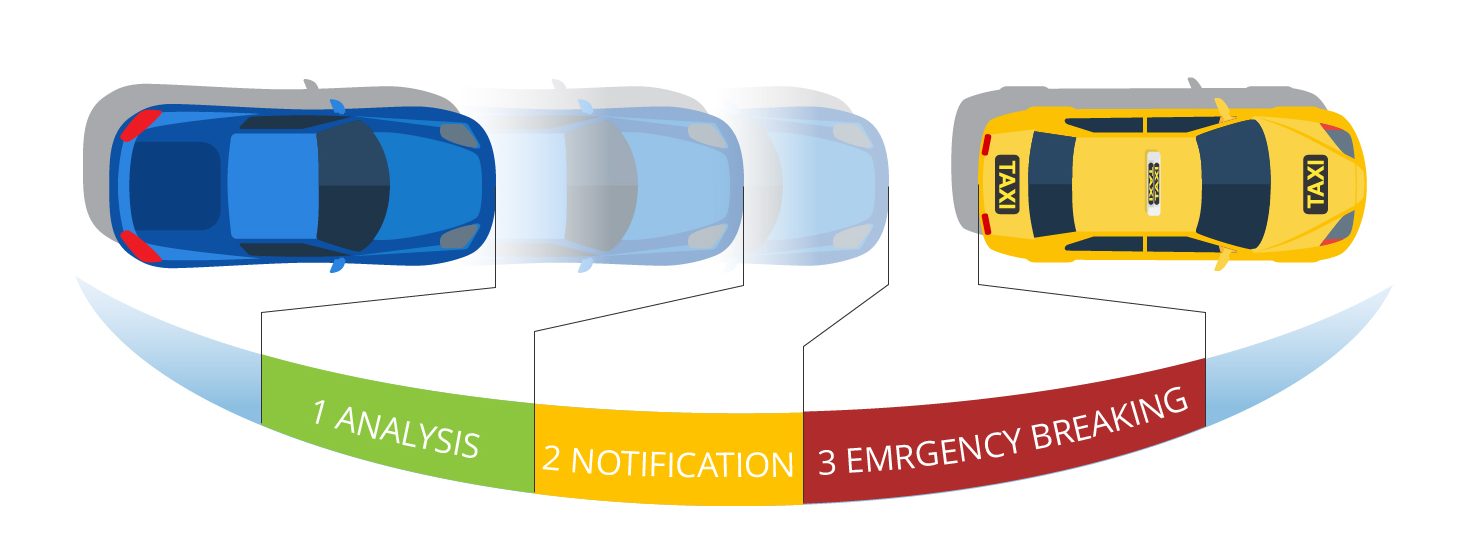

In general, the process of emergency braking is divided into several stages:

1) The sensors scan the road for potential threats. Received data is combined with information about the vehicle’s own speed and trajectory to determine if there is a collision hazard.

2) If a potential threat is detected, the system alerts the driver.

3) If no action is taken and a crash is imminent, the system applies the brakes.
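A common way to rate how urgent a threat is uses time-to-collision (TTC): the current gap divided by the closing speed. The sketch below maps the three stages onto TTC thresholds. The threshold values and function names are hypothetical, and production systems weigh many more factors.

```python
from enum import Enum

class AebAction(Enum):
    NONE = "no action"
    WARN = "warn driver"
    BRAKE = "apply brakes"

WARN_TTC_S = 2.5   # hypothetical: warn when impact is less than 2.5 s away
BRAKE_TTC_S = 1.2  # hypothetical: brake automatically below 1.2 s

def time_to_collision(gap_m: float, own_speed_ms: float, obstacle_speed_ms: float) -> float:
    """Seconds until impact if nobody changes speed; infinity if the gap is not closing."""
    closing = own_speed_ms - obstacle_speed_ms
    return gap_m / closing if closing > 0 else float("inf")

def aeb_step(gap_m: float, own_speed_ms: float, obstacle_speed_ms: float,
             driver_braking: bool) -> AebAction:
    # Stage 1: combine the sensed gap with both vehicles' speeds to rate the hazard.
    ttc = time_to_collision(gap_m, own_speed_ms, obstacle_speed_ms)
    # Stage 3 is checked first so the most urgent response wins:
    # crash imminent and the driver has not reacted, so brake automatically.
    if ttc < BRAKE_TTC_S and not driver_braking:
        return AebAction.BRAKE
    # Stage 2: potential threat, so alert the driver.
    if ttc < WARN_TTC_S:
        return AebAction.WARN
    return AebAction.NONE

# Own car at 15 m/s (54 km/h) approaching a stopped car.
for gap in (50.0, 30.0, 15.0):
    print(f"gap {gap:4.0f} m -> {aeb_step(gap, 15.0, 0.0, driver_braking=False).value}")
```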

The architecture of AEB systems varies from manufacturer to manufacturer: some only warn the driver to take action, while others brake automatically. Either way, the goal is to back up the driver with a machine that can react almost instantly.

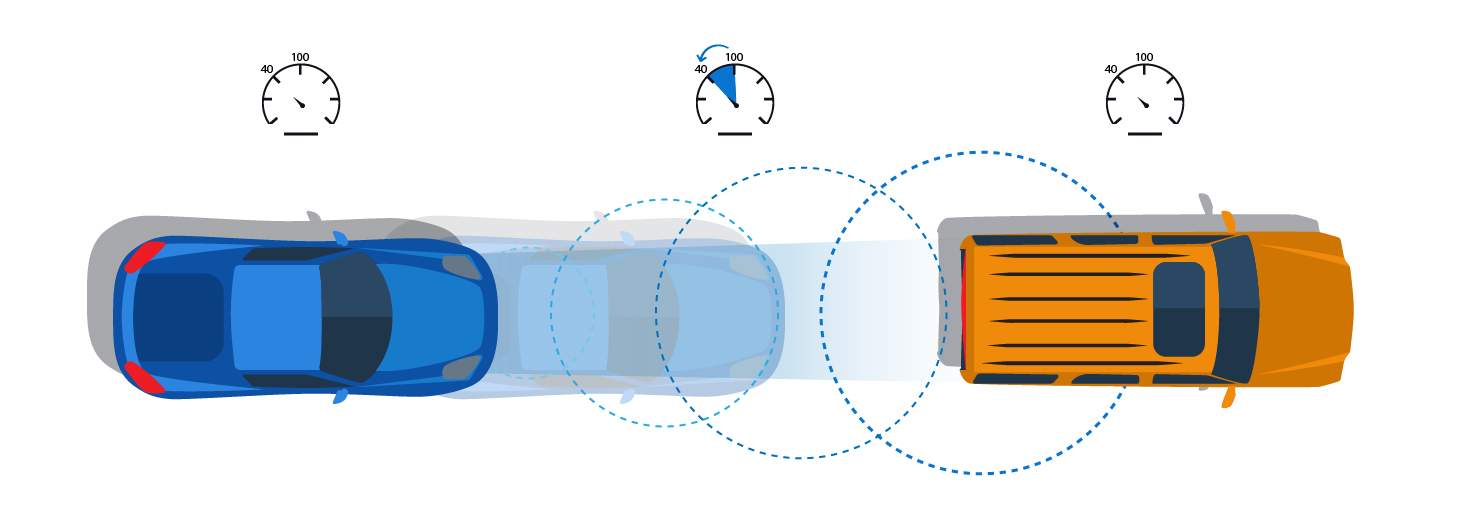

Adaptive cruise control

Adaptive cruise control (ACC) manages the car's own speed so that it keeps pace with other road users. The driver sets a maximum speed and a preferred following distance. When activated, ACC keeps the car 2 to 4 seconds behind the vehicle ahead.

Here's how it works:

- The cruise control is set at 70 mph

- Radar detects a slower vehicle ahead, and the system reduces speed to keep a preset following distance

- Cruise control matches the speed of the lead vehicle and returns to the original set speed once traffic clears
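One widely used way to implement this behavior is a constant time-gap policy, in which the desired following distance grows with speed. The controller blends the gap error and the speed difference to the lead car into an acceleration command. It never requests more acceleration than the set-speed controller would. The simulation below is a minimal sketch in which every gain and limit is an assumed value. The 2 s time gap sits at the low end of the 2 to 4 second range.

```python
from dataclasses import dataclass
from typing import Optional

MPH_TO_MS = 0.44704  # exact conversion factor

@dataclass
class AccParams:
    # Every value here is an illustrative assumption, not a production calibration.
    set_speed: float = 70 * MPH_TO_MS  # driver-selected cruise speed, m/s
    time_gap: float = 2.0              # desired headway, s
    standstill_gap: float = 5.0        # gap kept at zero speed, m
    k_gap: float = 0.1                 # gain on gap error, 1/s^2
    k_speed: float = 0.5               # gain on speed difference to the lead car, 1/s
    k_cruise: float = 0.3              # gain on set-speed error, 1/s
    max_accel: float = 2.0             # comfort acceleration limit, m/s^2
    max_decel: float = 3.0             # comfort braking limit, m/s^2 (harder stops left to AEB)

def acc_command(p: AccParams, v: float, gap: Optional[float], v_lead: Optional[float]) -> float:
    """Acceleration command (m/s^2) from a constant time-gap policy."""
    a_cruise = p.k_cruise * (p.set_speed - v)  # track the set speed
    if gap is None or v_lead is None:          # no vehicle ahead
        a = a_cruise
    else:
        desired_gap = p.standstill_gap + p.time_gap * v
        a_follow = p.k_gap * (gap - desired_gap) + p.k_speed * (v_lead - v)
        a = min(a_cruise, a_follow)            # the more cautious command wins
    return max(-p.max_decel, min(p.max_accel, a))

def simulate(p: AccParams, v0: float, gap0: float, v_lead: float,
             follow_s: float, clear_s: float, dt: float = 0.1) -> tuple[float, float, float]:
    """Follow a constant-speed lead car, then let it leave; returns (v_following, gap_following, v_final)."""
    v, gap = v0, gap0
    for _ in range(round(follow_s / dt)):
        a = acc_command(p, v, gap, v_lead)
        gap += (v_lead - v) * dt
        v = max(0.0, v + a * dt)
    v_following, gap_following = v, gap
    for _ in range(round(clear_s / dt)):       # traffic clears
        v = max(0.0, v + acc_command(p, v, None, None) * dt)
    return v_following, gap_following, v

params = AccParams()
v_follow, gap, v_final = simulate(params, v0=params.set_speed, gap0=100.0,
                                  v_lead=55 * MPH_TO_MS, follow_s=120, clear_s=60)
print(f"following: {v_follow / MPH_TO_MS:.1f} mph at {gap:.1f} m; "
      f"after clearing: {v_final / MPH_TO_MS:.1f} mph")
```

With these assumed gains, the car should settle at the lead vehicle's 55 mph with a gap of about 5 m + 2 s × speed. Once the lead car is gone, it should climb back to 70 mph.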

ACC is often paired with an emergency braking system so the car can react quickly if surrounding objects change their trajectory unexpectedly.

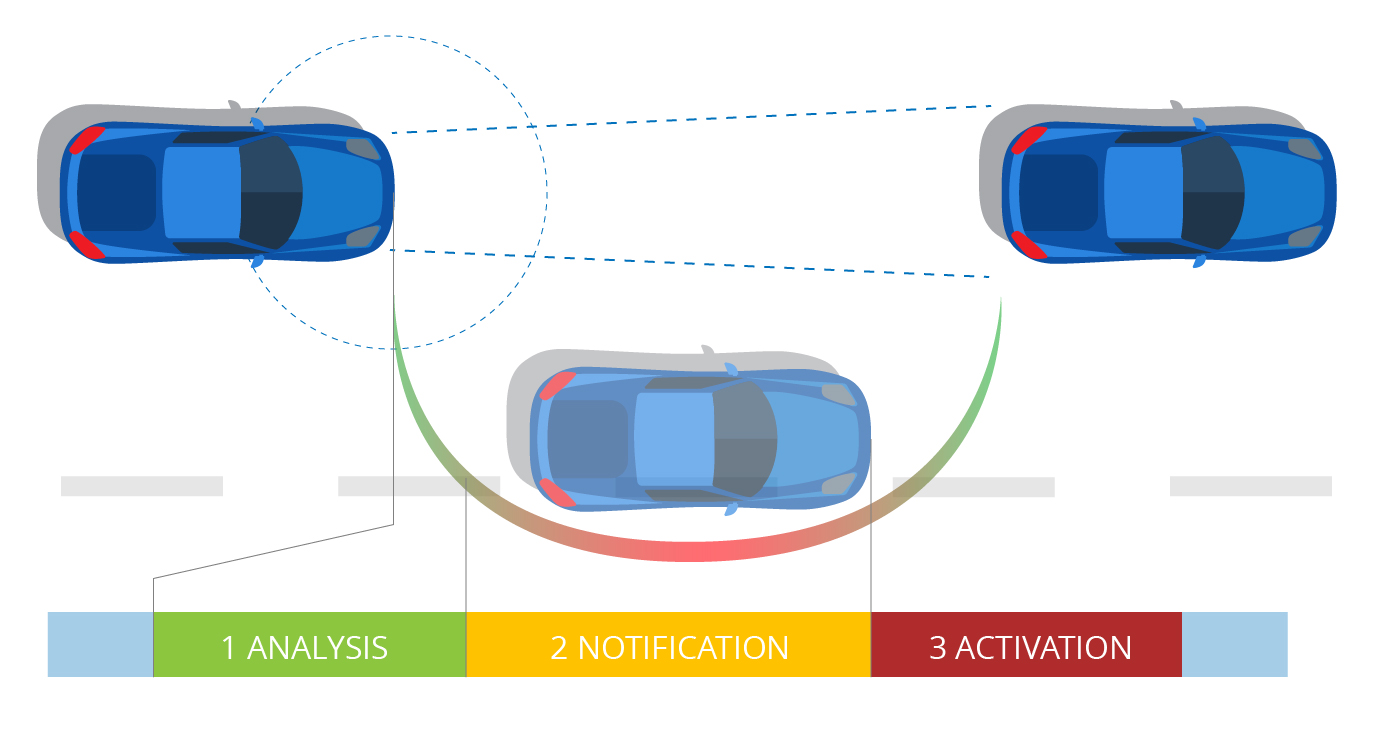

Active lane assist

Active lane assist (ALA) identifies lane markings and the car's position relative to them. If it detects that the vehicle is about to leave the lane, it alerts the driver visually, audibly, or through steering-wheel vibration. If the driver ignores the alert, the system steers the car back into the lane.

- Vehicle in lane

- The driver is alerted if the vehicle starts to leave the lane without the turn signal on

- The system gently steers the vehicle back into the lane if the driver doesn’t respond

The system stands down if it concludes that you are changing lanes deliberately (e.g., when the turn signal is on). The most recent versions have embedded AI that can detect whether the driver is keeping the road situation under control.
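Lane-departure logic is often framed in terms of time to line crossing (TLC): distance to the lane marking divided by the car's lateral speed toward it. Below is a minimal sketch of the alert-then-steer sequence, including the turn-signal override. The thresholds and names are hypothetical, and the AI-based driver monitoring is not modeled.

```python
from enum import Enum

class LaneAction(Enum):
    NONE = "none"
    ALERT = "alert driver"
    STEER = "steer back"

ALERT_TLC_S = 1.0    # hypothetical: alert if the line would be crossed within 1 s
STEER_AFTER_S = 0.5  # hypothetical: steer if the alert has been ignored this long

def time_to_line_crossing(dist_to_line_m: float, lateral_speed_ms: float) -> float:
    """Seconds until the car reaches the marking; lateral_speed_ms > 0 means drifting toward it."""
    return dist_to_line_m / lateral_speed_ms if lateral_speed_ms > 0 else float("inf")

def lane_assist(dist_to_line_m: float, lateral_speed_ms: float,
                turn_signal_on: bool, alert_elapsed_s: float) -> LaneAction:
    if turn_signal_on:
        return LaneAction.NONE   # deliberate lane change: stand down
    if time_to_line_crossing(dist_to_line_m, lateral_speed_ms) >= ALERT_TLC_S:
        return LaneAction.NONE   # vehicle comfortably in lane
    if alert_elapsed_s >= STEER_AFTER_S:
        return LaneAction.STEER  # driver ignored the alert
    return LaneAction.ALERT

print(lane_assist(0.5, 0.1, turn_signal_on=False, alert_elapsed_s=0.0).value)
print(lane_assist(0.3, 0.5, turn_signal_on=False, alert_elapsed_s=0.0).value)
print(lane_assist(0.3, 0.5, turn_signal_on=False, alert_elapsed_s=0.6).value)
print(lane_assist(0.3, 0.5, turn_signal_on=True, alert_elapsed_s=0.6).value)
```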

Vehicle-to-vehicle communication

Vehicle-to-vehicle (V2V) communication aims to improve intelligent transport systems by letting cars share data, such as their position and speed, over an ad-hoc mesh network.
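To make the idea concrete, here is a sketch of a broadcast status message and a receiver that flags hard braking nearby. A car could act on such a warning even when the braking vehicle is hidden from its own sensors. The message schema, JSON encoding and thresholds are all hypothetical simplifications. Deployed V2V systems use standardized binary formats such as the SAE J2735 Basic Safety Message.

```python
import json
import math
from dataclasses import dataclass, asdict

@dataclass
class SafetyMessage:
    """Hypothetical, simplified V2V status broadcast (not a real standard's schema)."""
    vehicle_id: str
    x_m: float          # position in a shared local frame, meters
    y_m: float
    speed_ms: float
    heading_deg: float
    accel_ms2: float    # negative = braking

    def encode(self) -> bytes:
        return json.dumps(asdict(self)).encode()

    @staticmethod
    def decode(raw: bytes) -> "SafetyMessage":
        return SafetyMessage(**json.loads(raw))

HARD_BRAKE_MS2 = -4.0   # assumed threshold for "hard braking"
ALERT_RADIUS_M = 300.0  # assumed relevance radius

def hard_braking_nearby(me: SafetyMessage, received: list[bytes]) -> list[str]:
    """IDs of nearby vehicles reporting hard braking, whether or not our sensors can see them."""
    alerts = []
    for raw in received:
        other = SafetyMessage.decode(raw)
        if other.vehicle_id == me.vehicle_id:
            continue
        distance = math.hypot(other.x_m - me.x_m, other.y_m - me.y_m)
        if distance <= ALERT_RADIUS_M and other.accel_ms2 <= HARD_BRAKE_MS2:
            alerts.append(other.vehicle_id)
    return alerts

me = SafetyMessage("ME", 0.0, 0.0, 25.0, 90.0, 0.0)
inbox = [
    SafetyMessage("A", 150.0, 0.0, 20.0, 90.0, -6.0).encode(),  # braking hard, close
    SafetyMessage("B", 500.0, 0.0, 22.0, 90.0, -7.0).encode(),  # braking hard, too far
    SafetyMessage("C", 80.0, 3.5, 24.0, 90.0, 0.5).encode(),    # cruising
]
print(hard_braking_nearby(me, inbox))  # ['A']
```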

While basic V2V capability is already built into some models, mass adoption is still beyond the horizon. Three major sticking points are holding the industry back: the need for manufacturers to agree on mutually compatible standards, privacy concerns, and funding. It is still unclear whether maintaining this communication infrastructure will be publicly or privately funded.

***

Safety concerns remain the most influential factor holding back mass adoption of self-driving technology. Even so, strong demand for better safety has fueled the development of features that leading manufacturers have already implemented. Emergency braking, adaptive cruise control, and lane assist already help prevent accidents and save lives. Refining these features may become a key factor in rebuilding customer trust.

At Intersog, we always stand ready to give you a hand in building safer and more effective transportation. Feel free to contact us to learn more about our IoT solutions.
