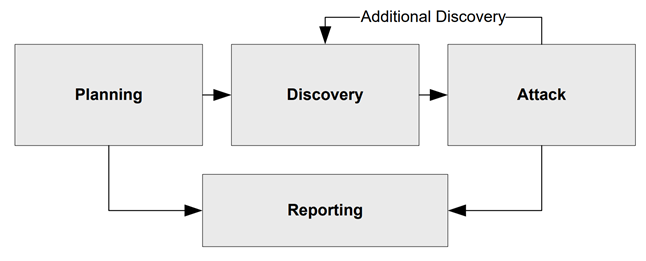

Penetration testing is a targeted, authorized security review, not simply a run of automated scanners or a free-for-all hackathon. It’s a structured, goal-driven assessment that identifies real, exploitable weaknesses. As industry experts emphasize, a pen test “should not be confrontational…[but] identifying the business risk associated with an attack”. Unlike a routine vulnerability scan, which merely lists potential issues, a penetration test combines human expertise and safe exploit attempts to validate how “weaknesses…can be exploited”. In practice, this means experienced testers mimic attackers within a strict legal scope and work with the client to prove how far a breach could go, rather than just cataloging flaws.

Scoping and Pre-Engagement: Planning the Test

Modern penetration testing begins long before any scanning tool is used. First comes the pre-engagement stage, during which testers and the client agree on the scope, rules and expectations in detail. A clear scope defines exactly which systems, applications, or networks are included (with everything else being explicitly excluded) and establishes the rules of engagement, such as which tests are permitted, the times during which testing is allowed, the communication channels to be used, and the incident escalation paths. Security consultants emphasise that defining scope is one of the most important components of a test. Failure to do so can lead to scope creep or legal issues. For example, testers confirm precisely which IP ranges or domains are authorised, since scanning unknown assets can be illegal. They also verify who owns those assets (e.g. whether servers are hosted by a third party) and note any jurisdictional issues (e.g. whether the target is hosted in the EU, which might require special privacy considerations).

In practice, this planning involves drawing up contracts and NDAs, scheduling the testing period (often outside normal working hours or within a designated timeframe) and deciding who to contact in the event of an incident. The agreement specifies exclusions (e.g. live databases or critical systems that should not be probed) and success criteria (e.g. identifying any critical vulnerabilities versus achieving a specific coverage goal). This phase may involve external cybersecurity experts or dedicated security consulting teams – many organisations find it makes sense to engage specialist cybersecurity services rather than risking amateur testing. Proper authorisation is formalised in writing so that the testers 'have the authority to conduct defined activities' without fear of legal repercussions. In short, a modern penetration test is a carefully scoped exercise with clear legal backing, not an open-ended attack drill.
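The scope check described above can be made mechanical before any tool touches the network. The following is a minimal sketch, assuming the agreement lists authorised ranges and exclusions in CIDR notation; the ranges shown are reserved documentation addresses, and the names `IN_SCOPE`, `EXCLUDED` and `is_authorised` are hypothetical.

```python
import ipaddress

# Hypothetical scope definition; real values come from the signed agreement.
IN_SCOPE = ["203.0.113.0/24", "198.51.100.16/28"]
EXCLUDED = ["203.0.113.10/32"]  # e.g. a live database the client ruled out


def is_authorised(target: str, in_scope=IN_SCOPE, excluded=EXCLUDED) -> bool:
    """Return True only if target is inside an authorised range and not excluded."""
    addr = ipaddress.ip_address(target)
    # Exclusions win over inclusions, so check them first.
    if any(addr in ipaddress.ip_network(net) for net in excluded):
        return False
    return any(addr in ipaddress.ip_network(net) for net in in_scope)


for target in ["203.0.113.25", "203.0.113.10", "192.0.2.1"]:
    print(target, is_authorised(target))
```

Checking exclusions before inclusions means an explicitly ruled-out host stays off limits even when it sits inside an authorised range.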

Reconnaissance: Mapping the Attack Surface

Once authorized, testers move into reconnaissance and surface mapping. Here the goal is to understand the target as an attacker would see it. Using a mix of automated tools and manual research, testers map network ranges, domain names, and service endpoints. They perform DNS and WHOIS lookups to list hosts, and may sniff the local network if they are testing from inside. Even public records and social media are fair game: for example, corporate websites or LinkedIn profiles can reveal usernames and system details. As NIST notes, pentesters gather hostnames and IPs via DNS or InterNIC queries, enumerate employees from web directories, and use techniques like NetBIOS enumeration or SNMP queries (on internal tests) to discover system names and shares. They also do banner grabbing (connecting to open ports and recording what the service announces) to identify software versions, and sometimes use low-tech methods such as “dumpster diving” or walking the premises to find printed passwords.
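Banner grabbing, as described above, amounts to opening a TCP connection and reading what the service sends first. The sketch below is illustrative only: `grab_banner` and `parse_banner` are hypothetical helper names, the regular expression covers only simple `Name/1.2.3` or `Name_1.2.3` banners, and the function should be run solely against hosts that are in scope.

```python
import re
import socket
from typing import Optional, Tuple


def grab_banner(host: str, port: int, timeout: float = 3.0) -> str:
    """Connect to a TCP port and return whatever the service sends first."""
    with socket.create_connection((host, port), timeout=timeout) as sock:
        sock.settimeout(timeout)
        try:
            data = sock.recv(1024)
        except socket.timeout:
            return ""  # some services wait for the client to speak first
    return data.decode("utf-8", errors="replace").strip()


def parse_banner(banner: str) -> Optional[Tuple[str, str]]:
    """Extract (product, version) from banners shaped like 'Name/1.2.3' or 'Name_1.2.3'."""
    match = re.search(r"([A-Za-z][A-Za-z-]*)[/_ ](\d+(?:\.\d+)+[\w.]*)", banner)
    if match is None:
        return None
    return match.group(1), match.group(2)


# Parsing works offline on banners that were recorded earlier.
print(parse_banner("SSH-2.0-OpenSSH_8.9p1 Ubuntu-3"))
print(parse_banner("Server: Apache/2.4.57 (Debian)"))
```

Keeping the parsing separate from the network call lets recorded banners be re-analysed later without touching the target again.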

All this information feeds a map of possible entry points. Automated vulnerability scanners are often run next, comparing discovered systems against known-flaw databases (like the NIST NVD). But scanners alone won’t get the job done: they may produce hundreds of alerts, many of which are false positives or irrelevant. Therefore testers manually review and validate each finding. NIST emphasizes that during analysis the primary goals are to “identify false positives, categorize vulnerabilities, and determine their causes”. In practice, this means a tester takes a scanner alert and manually probes the candidate vulnerability to see if it truly can be exploited. Only verified weaknesses move on to the next stage. This blend of automated and manual methods ensures that testers focus on real risks, not ghost issues.

Threat Modeling and Prioritization: Focusing on What Matters

With a list of potential vulnerabilities in hand, testers apply a simple truth: not all flaws are equally important. They build a mental threat model of the system, asking “Which assets or functions would an attacker really want to abuse?” Rather than chasing every low-severity finding, they prioritize based on impact and exploitability. Key targets usually include authentication and access controls (logins, password reset), sensitive data (customer info, secrets), and high-privilege functions (admin workflows). Business logic flaws (for example, checkout processes or transaction limits) also get close attention. PTES (the Penetration Testing Execution Standard) describes threat modeling as focusing on “business assets, business processes, threat communities, and their capabilities”. In other words, testers concentrate on the crown jewels (the data or functions an adversary would value) and the likely tactics an attacker would use.
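One simple way to express this prioritization is to score each candidate on impact and exploitability and sort by the product. This is a sketch under stated assumptions: the 1 to 5 scales, the multiplication and the sample entries are illustrative, not part of PTES or any other standard.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    impact: int          # 1 (minor) to 5 (crown-jewel asset); illustrative scale
    exploitability: int  # 1 (hard) to 5 (trivial); illustrative scale

    @property
    def priority(self) -> int:
        return self.impact * self.exploitability


def prioritise(candidates):
    """Order candidates so high-impact, easily exploited issues come first."""
    return sorted(candidates, key=lambda c: c.priority, reverse=True)


candidates = [
    Candidate("Verbose server header", impact=1, exploitability=5),
    Candidate("Password reset token reuse", impact=5, exploitability=4),
    Candidate("Admin workflow missing role check", impact=5, exploitability=3),
]
for c in prioritise(candidates):
    print(f"{c.priority:>2}  {c.name}")
```

Here the trivially exploitable but low-impact header issue drops to the bottom, while the authentication and admin-function issues rise to the top.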

Modern pentesters often use frameworks like OWASP’s Application Security Verification Standard (ASVS) or the OWASP API Security Top 10 as informal guides. These lists remind them to check for injection bugs, broken authentication, encryption weaknesses, and so on. But the focus is always on realistic scenarios. For instance, if an application has a multi-tenant design, testers examine “blast radius” issues: can an attacker on one tenant’s account affect another tenant? Cloud testers will look for misconfigurations in access roles. Mobile app testers consider insecure local storage or weak API calls. The ultimate aim is to explore the paths that matter most to the business, as an attacker would.

Vulnerability Discovery and Validation: From Finding to Proof

At this stage, testers actively probe each prioritized area using both tools and custom tests. They might run dynamic application scanners, fuzz web inputs, or enumerate APIs with a proxy. Crucially, they don’t stop at a mere alert. Every suspected vulnerability is manually verified. For example, if an automated scan flags a possible SQL injection point, the tester will craft a specific query to see if data can actually be extracted. If a configuration scan shows an open directory, the tester will try to list its contents. This distinction – finding potential issues vs proving them – is vital. As NIST notes, an exploit attempt “verifies potential vulnerabilities by attempting to exploit them” so that “if an attack is successful, the vulnerability is verified”. In other words, a flaw is only reported if there is evidence it can be abused in the target environment.
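The difference between a flagged SQL injection point and a proven one can be shown on a throwaway in-memory database. The sketch below is a self-contained illustration, not a testing tool: the table, the sample rows and the function names are hypothetical, and the probe string is a classic tautology used only to show that input reaches the query structure.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT, secret TEXT)")
conn.executemany(
    "INSERT INTO users (name, secret) VALUES (?, ?)",
    [("alice", "a-secret"), ("bob", "b-secret")],
)


def lookup_vulnerable(name: str):
    # String concatenation: user input becomes part of the SQL statement.
    query = "SELECT name, secret FROM users WHERE name = '" + name + "'"
    return conn.execute(query).fetchall()


def lookup_parameterised(name: str):
    # Placeholder binding: user input is treated as data only.
    return conn.execute(
        "SELECT name, secret FROM users WHERE name = ?", (name,)
    ).fetchall()


probe = "nobody' OR '1'='1"
print(len(lookup_vulnerable(probe)))     # every row comes back: the flaw is verified
print(len(lookup_parameterised(probe)))  # no rows: the probe is treated as a plain name
```

The first result is the kind of evidence that turns a scanner alert into a reportable finding; the second shows what the same probe looks like against code that binds its parameters.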

Throughout this process, testers rely on their security expertise. They draw on OWASP WSTG techniques for web apps, OWASP ASVS for general application security, the OWASP API Security Top 10 for APIs, and OWASP MASTG/MASVS for mobile apps. They use credentialed scans (authenticated testing) where allowed to get deeper visibility, and complement automated tool output with manual insight. False positives are weeded out by trying exploits or comparing tool results. As NIST points out, manual examination “typically provides more accurate results than comparing results from multiple tools”. The outcome is a vetted list of real security issues, each prioritized by severity and impact.

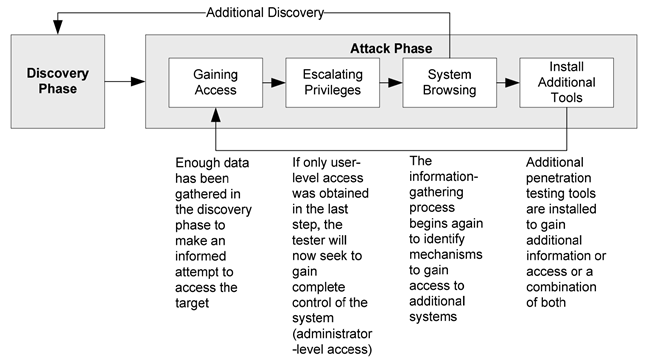

Controlled Exploitation: Confirming the Risk

Once a vulnerability has been confirmed, testers may attempt a controlled exploit in order to understand its potential impact. The aim is not to cause chaos, but to demonstrate potential consequences safely. For instance, a tester might inject a harmless payload into a form field to ascertain whether a database record can be read or modified. Alternatively, if a weakness in the admin login is found, they might use a proof-of-concept exploit to gain temporary access to a development or locked-down copy of the system and then stop immediately. This proves the severity of the issue, showing exactly what data could be accessed, which systems could be compromised and how far an attacker could penetrate, without actually causing any damage.

Controlled exploitation may also involve privilege escalation and lateral movement, if permitted by the contract. During internal tests, once a foothold has been gained, testers will attempt to escalate their permissions (for example, from regular user to system administrator). They may also attempt to move to other machines or services on the network. All such actions are carried out cautiously: testers follow fail-safe procedures so that, if something goes wrong (e.g. a server crashes), they can stop immediately. Crucially, everything is documented in real time (screenshots, logs and notes) to serve as evidence. This high-level exploitation is only carried out to confirm exploitability; the detailed 'how-to' steps of the attack remain confidential to protect against misuse. In summary, controlled exploitation in a penetration test is a carefully monitored demonstration of risk, not an all-out attack.

Post-Exploitation and Business Impact

After attempted exploits, testers analyze the fallout to gauge business impact. They assess how much control an attacker would have obtained: could they read sensitive user data, plant malicious software, or pivot to other critical systems? They also check for ways to maintain access (persistence) or create new backdoors if that was in scope. Equally important, testers note what defenses or logs were triggered (or not) during the test, highlighting any detection gaps. For example, if an exploit runs without alerting the security systems, that’s a finding too. (Often testers run in “covert” mode to simulate an attacker avoiding detection, but with prior permission.)

Ultimately, this post-exploitation analysis paints a picture of the true threat. It shows management the “blast radius” of a breach (e.g. that a stolen password could lead to full network control) and identifies any exposed trust boundaries. It also yields valuable context for prioritizing fixes. For instance, a medium-risk bug might jump to high priority if it allows admin takeover. By describing what an attacker could do in business terms, testers help translate technical findings into tangible risk. The focus is always on constructive insight: which combinations of vulnerabilities lead to the worst outcomes, and how to shut down those avenues safely.

Delivering the Pentest Report

All findings are compiled into a clear, actionable report for multiple audiences. Modern penetration test reports usually include an executive summary (a non-technical overview of key risks and impacts) and one or more technical sections containing detailed vulnerability descriptions for IT teams. At a minimum, the report 'identifies vulnerabilities and their recommended mitigation actions'. In practice, the report will include the following for each issue: a description of the flaw; where it was found (asset or URL); how it was detected; and proof-of-concept evidence (e.g. screenshots or logs). Each finding is given a severity or risk rating (often tied to standards such as CVSS). The report also explains the business impact, for example, 'an attacker could access customer data or disable a service', so that executives understand the implications.
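The per-finding fields listed above map naturally onto a small record type. The sketch below assumes findings are rated with CVSS v3.x base scores and uses that standard's qualitative bands (Low below 4.0, Medium below 7.0, High below 9.0, Critical from 9.0); the `Finding` class, its field names and the sample values are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


def cvss_rating(score: float) -> str:
    """Map a CVSS v3.x base score to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError("CVSS base scores range from 0.0 to 10.0")
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


@dataclass
class Finding:
    title: str
    location: str         # asset or URL where the flaw was found
    detection: str        # how it was detected
    cvss_score: float
    business_impact: str
    remediation: str
    evidence: List[str] = field(default_factory=list)  # screenshot or log references

    @property
    def severity(self) -> str:
        return cvss_rating(self.cvss_score)


finding = Finding(
    title="SQL injection in search form",
    location="https://app.example.com/search",
    detection="Manual probe following a scanner alert",
    cvss_score=8.6,
    business_impact="An attacker could access customer data",
    remediation="Use parameterised queries",
    evidence=["screenshots/search-sqli.png"],
)
print(finding.severity)
```

Deriving the severity label from the score, rather than storing both, keeps the executive summary and the technical section from drifting apart.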

Importantly, the penetration test report is made available to all relevant stakeholders, such as the CIO, CISO and system owners. It may be delivered in multiple formats, such as a brief slide deck or letter for management and a detailed PDF for engineers. NIST specifically notes that, since pentest results have diverse audiences, 'multiple report formats may be required' to address each group. In practical terms, the report should also include remediation guidance (suggested fixes or workarounds) and advice on retesting. Many reports conclude with an overall risk summary and recommended next steps. Thus, a high-quality report empowers leadership to make informed decisions and enables developers to apply the right patches.

Remediation and Retesting

A penetration test is only valuable if its findings are acted upon. Once the report has been delivered, the development and operations teams work together to resolve or mitigate the issues that have been identified. This may involve patching software, tightening configurations or rewriting insecure code. Best practice is to formally track these fixes, often via a Plan of Actions & Milestones (POA&M). NIST notes that, once the fixes have been implemented, organisations should conduct audits and retests to confirm that the problem has actually been solved. In other words, testers (or internal QA/security teams) repeat the specific tests in a 'mirror' setup to validate that the vulnerabilities have been eliminated. This retesting step verifies that the mitigation actions were completed correctly.
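Retesting can be tracked by pairing each finding with the specific check that originally proved it. The following is a rough sketch: the finding IDs, the status labels and the stub checks are hypothetical, and in a real retest each callable would repeat the original validation step against the fixed system.

```python
from typing import Callable, Dict


def retest(checks: Dict[str, Callable[[], bool]]) -> Dict[str, str]:
    """Re-run each finding's check; a check returns True while the issue is still exploitable."""
    results = {}
    for finding_id, still_exploitable in checks.items():
        results[finding_id] = "open" if still_exploitable() else "verified fixed"
    return results


checks = {
    "F-001": lambda: False,  # stub: the injection probe no longer returns data
    "F-002": lambda: True,   # stub: the exposed directory is still listable
}
print(retest(checks))
```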

To make this process more efficient, many teams integrate security issues into their regular QA and development workflows. For instance, fixes can be added to the backlog and tested during the subsequent sprint or release cycle. Security-conscious organisations may even have specialised QA and testing services that incorporate pen-testing checks into their processes. Linking the penetration test back into the ticketing and test-tracking system helps to ensure that no issues slip through the net. In short, remediation is not an afterthought – it’s part of the security lifecycle. By systematically addressing high-risk issues and re-verifying them, teams can enhance their resilience. It's worth noting that neither a scan nor a single penetration test can 'prove' complete security, but responding to real-world test results is how organisations grow stronger.

Testing Different Environments

The core pentest process is similar across contexts, but the specifics change with the environment under test:

- Web application pentests focus on browser-accessible interfaces. Testers follow OWASP WSTG guides to check for issues like SQL/NoSQL injection, cross-site scripting, CSRF, broken access control, insecure session management, etc. They may test both authenticated and unauthenticated functionality. A tester might crawl the site (often using an automated scanner) and then manually probe complex areas like multi-step forms or payment flows.

- API pentests (e.g. REST/GraphQL services) skip the browser and target backend endpoints directly. Here the focus is on JSON/XML inputs, authentication tokens, and API rate limits. Testers look for API-specific risks: insufficient authorization (e.g. changing a parameter to access another user’s data, often called IDOR), injection in API calls, excessive data exposure in JSON responses, and abuse of business logic. OWASP’s API Security Top 10 is a useful reference for common pitfalls (broken object level authorization, broken authentication, security misconfiguration, etc.). Testing might involve automated tools that send crafted API requests, as well as manual checks of each endpoint’s logic.

- Cloud environment pentests examine cloud platforms (AWS, Azure, GCP) under the shared-responsibility model. Testers focus on the assets that the customer controls: virtual machines, containers, IAM roles, storage buckets and so on. They assess misconfigurations (e.g. public S3 buckets, overly permissive IAM policies), insecure networking rules, and exposed cloud metadata services. The methodology differs from on-premises tests: for example, there’s usually no traditional perimeter firewall to bypass, but there are cloud APIs and CLI tools through which misconfigurations can be exploited. Testers must also respect cloud provider pentest policies (e.g. AWS publishes a policy on which services may be tested and which activities are prohibited). In sum, a cloud pentest looks at the customer’s side of the shared-responsibility model: the customer’s own cloud configuration and applications, not the provider’s underlying infrastructure.

- Mobile app pentests involve testing both the device app and its backend. Testers often start with static analysis of the app binary (looking for hard-coded secrets, insecure crypto, or permission issues), using OWASP MASVS/MASTG as a guide. They then run the app (on an emulator or device) to observe network traffic, testing APIs and local data storage. Issues like insecure storage of tokens, missing or weak certificate pinning, or insecure inter-app communication are common in this domain. Mobile tests blend code review, dynamic probing, and even reverse-engineering when needed, always respecting platform rules and the agreed scope (e.g. jailbreaking or rooting a test device is typically done only with permission).

- Internal vs. external testing changes the tester’s starting point. In an external pentest, the tester sits outside the network with only public information (domain names or IPs). They simulate an outside attacker, using Internet-facing scans and OSINT. In an internal pentest, the tester starts inside the corporate network (often with a low-privilege user account) and can use internal techniques like network sniffing and directory queries. NIST notes that internal tests “take place behind perimeter defenses” and may use techniques like sniffing that aren’t available externally. If both are done, it’s common to run the external test first, so that knowledge gained inside the network does not give the tester an unrealistic head start on the external test. Both scenarios are valid: external tests check the internet-facing perimeter, while internal tests simulate a breach or malicious insider.
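To illustrate the cloud misconfiguration checks mentioned in the list above, the sketch below scans an AWS-style JSON policy document for Allow statements whose principal is a wildcard, the pattern behind most public storage buckets. It is a simplified assumption-laden example: `public_statements` is a hypothetical helper, the sample policy and its ARNs are made up, and `Condition` blocks are not evaluated, so anything flagged is a candidate for manual review rather than a confirmed finding.

```python
import json


def public_statements(policy_json: str):
    """Return the Sids of Allow statements whose principal is a wildcard."""
    policy = json.loads(policy_json)
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a single statement may appear without a list
        statements = [statements]
    flagged = []
    for index, stmt in enumerate(statements):
        principal = stmt.get("Principal")
        is_wildcard = principal == "*" or (
            isinstance(principal, dict) and principal.get("AWS") == "*"
        )
        if stmt.get("Effect") == "Allow" and is_wildcard:
            flagged.append(stmt.get("Sid", f"statement-{index}"))
    return flagged


# Made-up policy for illustration; the bucket and role names are placeholders.
POLICY = json.dumps({
    "Version": "2012-10-17",
    "Statement": [
        {"Sid": "PublicRead", "Effect": "Allow", "Principal": "*",
         "Action": "s3:GetObject", "Resource": "arn:aws:s3:::example-bucket/*"},
        {"Sid": "TeamWrite", "Effect": "Allow",
         "Principal": {"AWS": "arn:aws:iam::123456789012:role/example-role"},
         "Action": "s3:PutObject", "Resource": "arn:aws:s3:::example-bucket/*"},
    ],
})
print(public_statements(POLICY))
```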

No matter the environment, the pentesting methodology remains disciplined: scope, recon, exploit, report, and retest. Testing approaches differ (e.g. web flows vs. API calls), but the goal is always the same: find and prove real security gaps so they can be fixed.

Conclusion

A mature security strategy acknowledges that no test can guarantee absolute security. Penetration testing does not 'prove security'; it measures exploitability. By following a structured process involving proper scoping, thorough reconnaissance, focused threat modelling, careful validation and controlled exploitation, penetration tests reveal how an attacker could break in. The ultimate value lies not in the test itself, but in what you do afterwards: addressing issues, strengthening defences and incorporating security awareness into development. Ultimately, a good penetration test provides organisations with a clear view of their real-world risks, enabling them to improve. It’s a practical, business-driven exercise that complements other QA and security practices, helping teams build more resilient systems (see Intersog’s cybersecurity services and consultancy, for example) and fold security into their normal development and testing lifecycles (see Intersog’s QA and testing services).
