Artificial intelligence has evolved from a research novelty to become a core component of modern products. Today’s generative AI applications, from chatbots to recommendation engines, rely on a variety of models, tools, and infrastructure. For CTOs, product leaders and founders, choosing the right technology stack is no longer an afterthought; it’s a strategic decision that determines whether your prototype becomes a scalable enterprise AI solution or an expensive experiment.

This guide offers a framework to help you weigh up the options. We will explore how to incorporate domain knowledge using retrieval-augmented generation (RAG) or fine-tuning, when to use open-source versus proprietary large language models (LLMs), how to select a vector database, and what serving models in production requires. We will also examine cost models from prototype to scale, highlight security and compliance pitfalls, and cover other aspects of AI development.

We’ll be updating this guide step by step, so stay tuned!

RAG vs. Fine‑Tuning vs. Hybrid: Choosing Your Model Strategy

Before diving into comparisons, let’s define each approach and outline how they work in a real system. This primer focuses on practical architecture elements – how data is used, infrastructure components, and maintainability implications – rather than deep theory.

Retrieval-Augmented Generation (RAG)

RAG is a technique in which an LLM is augmented with an external knowledge base so that its answers are grounded in specific data. RAG pipelines retrieve relevant information at query time and inject it into the LLM’s prompt (input context), rather than relying solely on the model’s fixed training data. The LLM then uses both its general knowledge and the retrieved data to generate a response. Essentially, RAG connects your AI model to your proprietary information sources in real time.

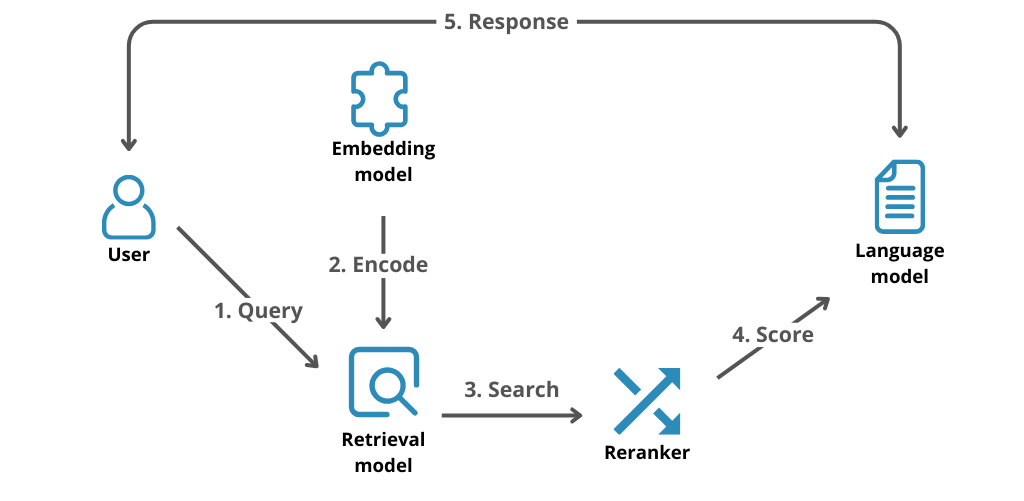

A typical RAG architecture involves a vector database or document index that stores the organisation's texts (such as documents, wikis and manuals) in embedding form for semantic searches. When a user submits a query, the system identifies the most relevant documents using embedding similarity and incorporates these documents (or snippets) alongside the original query into the LLM’s prompt. The model’s answer is thereby 'augmented' with up-to-date, context-specific information. This requires additional infrastructure, as you need pipelines to ingest and chunk documents, embed them in vector space and update the index as knowledge evolves. However, RAG avoids altering the LLM’s internal weights, with all customisation happening via the data pipeline and prompt.
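
The retrieve-then-prompt flow described above can be sketched in a few lines. This is a minimal illustration, not a production design: the `embed` function is a toy bag-of-words stand-in for a real embedding model, the in-memory list stands in for a vector database, and the sample documents and prompt wording are made up for the example.

```python
import math
import re
from collections import Counter

def embed(text: str) -> Counter:
    """Toy embedding: bag-of-words counts (assumption: stands in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query: str, index: list[tuple[str, Counter]], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]

def build_prompt(query: str, snippets: list[str]) -> str:
    """Inject the retrieved snippets into the prompt alongside the original query."""
    context = "\n".join(f"- {s}" for s in snippets)
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {query}"

# Ingestion: embed each document once and keep it in the index.
documents = [
    "To reset your VPN password, open the IT portal and choose Reset VPN.",
    "Employees accrue 25 days of vacation per year.",
    "The cafeteria is open from 8am to 3pm on weekdays.",
]
index = [(doc, embed(doc)) for doc in documents]

# Query time: retrieve, then build the augmented prompt for the LLM.
question = "How do I reset my VPN password?"
top = retrieve(question, index, k=1)
prompt = build_prompt(question, top)
```

The `prompt` string is what would be sent to the LLM; the model's weights are never touched, which is the point of the approach.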

In practice, RAG is highly effective in question-and-answer and assistant applications that require access to up-to-date or proprietary information. For instance, a customer support chatbot can use RAG to retrieve answers from the most recent product documentation or an internal wiki, which helps keep its answers accurate and current. Because the model answers from the retrieved text, RAG can also reduce hallucinations and enable source attribution in responses (e.g. by showing document links). However, implementing RAG requires investment in data engineering, including preparing a high-quality knowledge base, keeping it updated and tuning the retrieval method for your domain. If no curated data repository exists yet, creating one can be a significant project in itself. We’ll discuss these trade-offs in more detail later.

Fine-Tuning

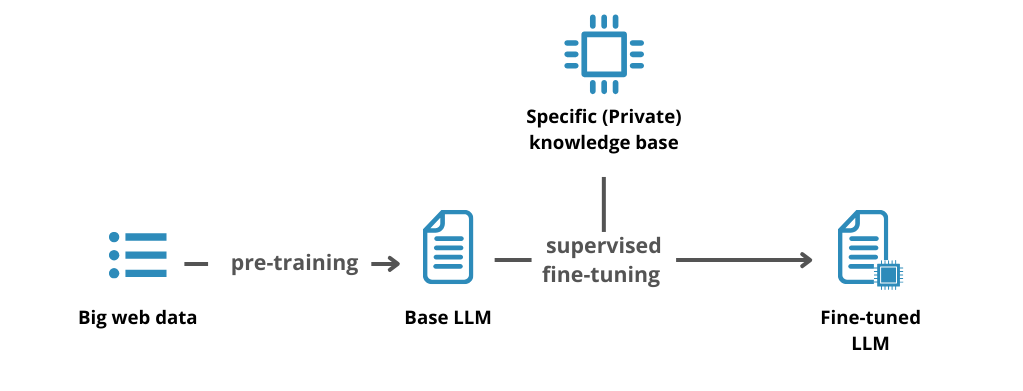

Fine-tuning is a machine learning development process in which a pre-trained foundation model is further trained on domain-specific data to adjust its behaviour and knowledge. In essence, the model's internal parameters (weights) are optimised using your labelled examples or documents, enabling it to "learn" your task or jargon. Rather than relying on external retrieval, fine-tuning embeds your custom knowledge directly into the model itself.

In a fine-tuning workflow, you assemble a relevant dataset for your use case, such as a set of Q&A pairs, documents with desired summaries or transcripts demonstrating the desired style and tone. You then train the LLM on this data via supervised learning, typically using specialised infrastructure such as powerful GPUs or cloud ML services. The model then updates its weights to reduce error on the new examples, thereby incorporating the patterns of your data into its neural network. This can be achieved through a full fine-tune (whereby all model weights are updated) or via parameter-efficient fine-tuning (PEFT) methods such as LoRA adapters, which only require the training of small additional weight matrices. PEFT techniques have become popular as they require far less computing power while still effectively steering the model.
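
To see why LoRA-style adapters need so much less compute, it helps to count trainable parameters. The sketch below assumes the standard LoRA formulation, in which a frozen weight matrix of shape `d_out x d_in` is accompanied by two small trainable matrices of shapes `d_out x r` and `r x d_in`. The layer size (4096) and rank (8) are illustrative values, not figures from this guide.

```python
def full_params(d_in: int, d_out: int) -> int:
    """Parameters updated when the whole weight matrix is fine-tuned."""
    return d_in * d_out

def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Parameters trained by a LoRA adapter: B (d_out x rank) plus A (rank x d_in)."""
    return rank * (d_in + d_out)

d = 4096                                    # hypothetical hidden size of one projection layer
full = full_params(d, d)                    # 16,777,216 weights in the frozen matrix
lora = lora_trainable_params(d, d, rank=8)  # 65,536 trainable adapter weights
fraction = lora / full                      # 1/256 of the full matrix, i.e. about 0.39%
```

Under these assumptions, the adapter trains well under one percent of the weights of that layer, which is where the memory and compute savings come from.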

The appeal of fine-tuning lies in its ability to create a specialised model with a deep understanding of your context. It can produce highly accurate outputs that use the correct terminology and style for your field. For example, a fine-tuned medical assistant can respond more like a clinician, using precise clinical language and picking up on details that a generic model might overlook. Fine-tuning can also enable a smaller model to perform as well as a much larger one on a specific task, improving efficiency. One study found that a fine-tuned model 1,400 times smaller than GPT-3 matched its performance on a task. However, fine-tuning has notable downsides: it requires substantial investment in data preparation and training. A large, high-quality dataset is required (often with thousands of examples), as well as expertise in LLM training, to avoid issues such as overfitting. Training can also take hours or days of computing time for large models. Once trained, a fine-tuned model is also static, so if your knowledge or requirements change, you must collect new data and fine-tune again to update the model’s knowledge.

Hybrid Approach (RAG + Fine-Tuning)

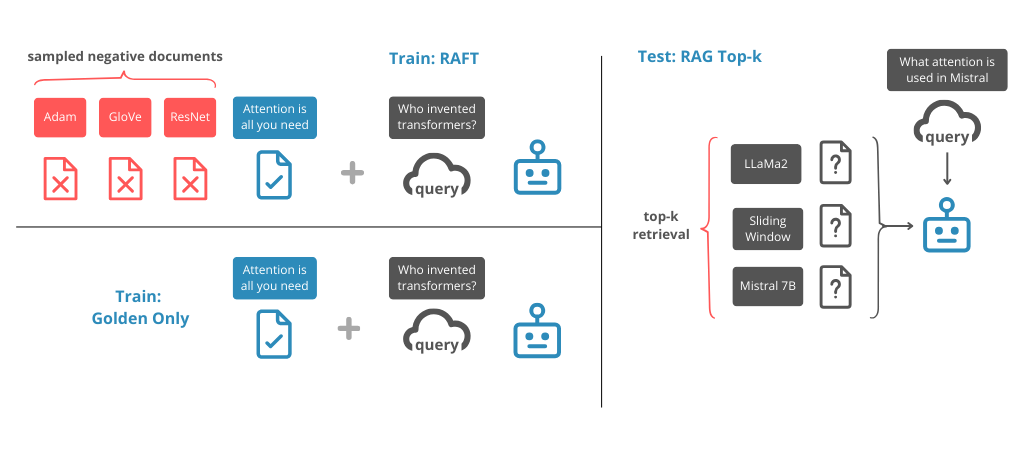

In reality, RAG and fine-tuning are not mutually exclusive. Many advanced enterprise AI solutions combine both techniques to achieve optimal results. With a hybrid architecture, you can fine-tune a model using your core domain data and deploy it alongside a retrieval mechanism for real-time information. This approach is sometimes referred to as 'RAFT' (Retrieval-Augmented Fine-Tuning) in recent literature.

Why do both? Imagine you have proprietary processes or tone that you want the AI to master, which is best achieved by fine-tuning, but you also have a continually changing knowledge set that is best handled by retrieval. A hybrid system would allow you to fine-tune an LLM using your company's product manuals and style guidelines, ensuring it responds with the correct expertise and tone. It would also use RAG to retrieve the latest product updates released after the fine-tuning. One company that needed to keep an AI chatbot updated on monthly product changes used this exact strategy: they retrained the model every couple of months for general alignment and used RAG daily to pull in new product details. Fine-tuning made the model's responses more coherent and tailored, while retrieval ensured that the information was always up to date.

The trade-off, of course, is complexity. A hybrid approach inherits the challenges of both RAG and fine-tuning: you need to perform data engineering on the knowledge base and manage the training of the models. Strong MLOps/LLMOps maturity is required for deployment. However, for well-established use cases, this approach can produce highly accurate, context-aware outputs that neither method could achieve alone. We will explore when this added complexity is warranted in later sections.

Decision Dimensions: RAG vs. Fine-Tuning vs. Hybrid

When deciding among RAG, fine-tuning, or a hybrid approach, it helps to evaluate them across a set of key dimensions. Below we compare the approaches in terms of:

- Knowledge Freshness: How well can the solution incorporate new or updated information?

- Data Labeling & Preparation: What are the data requirements and effort needed (e.g. labeled training data vs. unstructured documents)?

- Latency & Cost: How does each approach impact runtime speed and infrastructure cost, as well as upfront investment?

- Compliance & Traceability: How easy is it to meet data privacy requirements and provide transparent, explainable answers?

- Scalability & Maintenance: How much effort does it take to maintain and scale the solution as knowledge grows or as you serve multiple domains?

- Team Expertise & Project Maturity: What level of ML expertise, engineering resources, and project maturity is needed to implement each approach successfully?

Let’s break down each of these decision factors and see how RAG, fine-tuning, and hybrid stack up.

Knowledge Freshness

RAG excels at ensuring knowledge is up to date. As it retrieves answers from an external database in real time, an LLM with RAG can leverage information that is as current as the latest update to the knowledge store. If answers need to reflect today's policies or news, RAG can handle this immediately by retrieving the relevant content.

By contrast, a fine-tuned model is limited to the data it encountered during its last training run – its knowledge is 'frozen' at that point in time. Without retraining, a fine-tuned LLM will be unaware of any facts or changes that occurred afterwards. Hybrid systems mitigate this issue by using retrieval to access fresh data, while the fine-tuned model covers foundational knowledge.

In short, RAG (or hybrid) systems have a clear advantage when it comes to keeping answers up to date with rapidly changing information.

Data Labeling & Preparation

Fine-tuning usually requires task-specific, curated, labelled data, which can be a significant undertaking. Organisations must gather large sets of examples (questions and correct answers, desired outputs, etc.), clean and label them, and ensure that they cover enough variation to teach the model. This process can take weeks or months, often requiring domain experts to provide or verify the data.

RAG, on the other hand, requires a corpus of documents or information, but not in a labelled QA format. You can use existing unstructured data, such as web pages, PDFs and knowledge base articles, largely as it is, after some pre-processing such as chunking and indexing. There is no need for a supervised 'expected output' for each document since the model will generate answers dynamically from them. Therefore, preparing data for RAG is often about the quantity and coverage of your knowledge base, whereas fine-tuning is about the quality and representativeness of your training set.

A hybrid approach requires both: you will need a labelled dataset for fine-tuning and a rich document store for retrieval.
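
The difference in data shape can be made concrete with a short sketch. The word-window chunker, the record fields (`doc_id`, `chunk_id`, `text`) and the `prompt`/`completion` keys are all illustrative assumptions; real pipelines often chunk by tokens or document structure, and each training API defines its own example format.

```python
import json

def chunk_words(text: str, size: int = 50, overlap: int = 10) -> list[str]:
    """Split text into windows of `size` words, each sharing `overlap` words with the previous one."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    words = text.split()
    if not words:
        return []
    step = size - overlap
    last_start = max(len(words) - overlap, 1)
    return [" ".join(words[i:i + size]) for i in range(0, last_start, step)]

# RAG side: unlabelled text becomes index records; no expected answer is needed.
manual = " ".join(f"w{i}" for i in range(120))  # placeholder for a real document
rag_records = [
    {"doc_id": "manual", "chunk_id": n, "text": chunk}
    for n, chunk in enumerate(chunk_words(manual))
]

# Fine-tuning side: every example pairs an input with the desired output.
finetune_line = json.dumps({
    "prompt": "How do I reset my VPN password?",
    "completion": "Open the IT portal and choose Reset VPN.",
})
```

The RAG records can be produced mechanically from existing content, while each fine-tuning line needs someone (or something) to supply a correct target output, which is where most of the labelling effort goes.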

Latency & Cost

The fundamental difference lies in where the 'work' happens.

Fine-tuning front-loads the effort as a training cost: computational resources (and money) are spent training or adapting the model upfront. After that, inference is typically fast, because the fine-tuned model generates answers directly, without the need for a database lookup.

In contrast, RAG defers the effort to runtime: each user query triggers a vector search and data loading into the prompt, adding latency and computational overhead to every request. RAG also requires search infrastructure to be maintained (incurring continuous cloud or hardware costs), whereas a fine-tuned model mostly just needs an API or server to host it. In summary, fine-tuning is more expensive upfront and RAG is more expensive per query. However, in many cases, fine-tuning can reduce ongoing costs by enabling the use of a smaller model.

For example, rather than running an expensive, large model for each query, you could fine-tune a smaller open-source model once and deploy it for cheaper inference. However, if your data or content changes often, the cost of frequent retraining can add up.

Hybrid approaches incur costs at both ends (training and retrieval), so the cost/latency analysis depends on how frequently you retrain and how complex your retrieval process is.

Generally, if low latency is paramount and the domain is static, fine-tuning is preferable (as there are no extra hops at runtime), but if you require current data and can tolerate slightly more latency, RAG is worthwhile.
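
The upfront-versus-per-query trade-off can be framed as a break-even calculation. The sketch below is a simplified model with made-up numbers: it assumes a one-off training cost, a constant cost per query for each option, and no retraining. Real costs vary with model, provider and traffic.

```python
import math
from typing import Optional

def break_even_queries(training_cost: float,
                       baseline_cost_per_query: float,
                       finetuned_cost_per_query: float) -> Optional[int]:
    """Number of queries after which the upfront training cost has paid for itself.

    Returns None if the fine-tuned option is not cheaper per query.
    """
    saving = baseline_cost_per_query - finetuned_cost_per_query
    if saving <= 0:
        return None
    return math.ceil(training_cost / saving)

# Hypothetical figures: large model with a long retrieved prompt vs. a small fine-tuned model.
n = break_even_queries(training_cost=5000.0,
                       baseline_cost_per_query=0.02,
                       finetuned_cost_per_query=0.005)
# n is 333334: below that volume, the option with no training cost is cheaper overall.
```

If the model has to be retrained regularly, the training cost recurs and the break-even point moves out accordingly.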

Compliance & Traceability

This dimension is about controlling data and being able to explain or justify the outputs of AI systems, which is crucial for enterprise solutions in regulated industries.

RAG offers an advantage in this respect: since the responses are based on retrieved documents, it is often possible to provide citations or references to sources, thereby building trust and facilitating auditing processes.

For example, if a user asks, 'How do we handle data retention for EU customers?', a RAG-based assistant can quote the relevant GDPR policy text from your knowledge base, providing a clear source. In contrast, a fine-tuned model would simply answer from its internal memory, with no obvious way to demonstrate the basis of its response.

From a privacy perspective, RAG can also be configured so that all proprietary data remains in your controlled database and is not incorporated into the model weights (which could potentially be leaked if the model is probed). Oracle, for example, notes that RAG is often considered superior for data privacy because sensitive information remains in a secure, access-controlled database rather than being reproduced in the model.

Fine-tuning, by contrast, requires feeding your proprietary data into the model training process, which may be run by a third party. If this is not carefully managed, there is a risk that the model will memorise and regurgitate private details. Furthermore, if a regulation changes or a certain data point must be removed for compliance reasons, RAG enables you to simply delete or update the source document in the index. In contrast, a fine-tuned model would require a new training run to forget or correct that information.

In summary, RAG tends to be better for transparency and updatability, whereas fine-tuned models can be opaque and difficult to amend. A hybrid approach can provide source citations for new information via RAG; however, any content originating from the fine-tuned portion will still lack direct attribution.
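
Both properties, source attribution and easy removal, follow from keeping knowledge in an index rather than in model weights. The following sketch illustrates this with a tiny in-memory index; the class, the keyword-overlap search and the policy text are hypothetical placeholders rather than a real retrieval stack or real policy wording.

```python
import re
from dataclasses import dataclass

@dataclass
class Passage:
    doc_id: str
    text: str

def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))

class KnowledgeIndex:
    """In-memory stand-in for a document index that supports updates and deletions."""

    def __init__(self) -> None:
        self._passages: dict[str, Passage] = {}

    def upsert(self, passage: Passage) -> None:
        self._passages[passage.doc_id] = passage

    def delete(self, doc_id: str) -> None:
        self._passages.pop(doc_id, None)

    def search(self, query: str) -> list[Passage]:
        # Naive keyword overlap; a real system would use embeddings or a search engine.
        q = tokens(query)
        return [p for p in self._passages.values() if q & tokens(p.text)]

def answer_with_citations(query: str, index: KnowledgeIndex) -> str:
    hits = index.search(query)
    if not hits:
        return "No supporting document found."
    return " ".join(f"{p.text} [source: {p.doc_id}]" for p in hits)

index = KnowledgeIndex()
index.upsert(Passage("data-retention-policy", "EU customer data retention is limited to 24 months."))
index.upsert(Passage("travel-policy", "Book flights through the travel portal."))

question = "How do we handle data retention for EU customers?"
before = answer_with_citations(question, index)   # quotes the policy and names its source

index.delete("data-retention-policy")              # compliance removal: no retraining involved
after = answer_with_citations(question, index)     # the removed text can no longer be returned
```

A fine-tuned model has no equivalent of `delete`: removing what it learned from a document means training again on data that excludes it.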

Scalability & Maintenance

Scalability can refer to both scaling up knowledge and extending it to new domains or use cases.

With RAG, expanding the knowledge base is straightforward: more documents can be added to the database or new data sources can be connected. The model can instantly take advantage of the expanded knowledge base without retraining, subject to context length limits. The main task in maintaining a RAG system is to maintain the data pipelines, ensuring that new or updated documents are indexed and irrelevant or outdated content is cleaned up. It may also be necessary to optimise the retrieval process as the amount of data grows.

Fine-tuned models are less flexible in this respect: the model's knowledge base does not increase until another fine-tuning job is run on new data, which, as discussed, is a non-trivial process. If you want to scale out to multiple domains or clients, fine-tuning may require training separate model variants for each one (since each variant incorporates its own dataset), which can result in an overwhelming maintenance burden.

RAG can handle multi-domain queries simply by switching the context or by indexing multiple knowledge bases behind the same core model. This multi-tenancy advantage is why many SaaS companies choose RAG: one model can serve different customers’ data, instead of fine-tuning dozens of custom models.

Conversely, serving a fine-tuned model at scale is simpler architecturally, as it only requires load balancing of model inference, whereas a RAG system at scale must handle both model inference and high-throughput vector search operations. A hybrid approach meets both needs: you would periodically fine-tune new model versions (e.g. as you accumulate more data or improvements) and continuously maintain the knowledge index.

The good news is that adding documents (RAG) and retraining models (fine-tuning) can be done at different frequencies, giving you flexibility – for example, you could update documents weekly and retrain the model quarterly as needed.

Team Expertise & Project Maturity

Implementing fine-tuning versus RAG often demands different skill sets.

RAG tends to be more of a software engineering and data engineering challenge (integrating databases, building search indexes, orchestrating APIs) and can often be achieved without deep machine learning expertise. Cloud providers even offer managed RAG tools (for instance, Amazon Bedrock Knowledge Bases or Azure AI Search, formerly Azure Cognitive Search) that let developers plug documents into an LLM system with relative ease.

Fine-tuning, in contrast, is an ML research/engineering exercise – you likely need data scientists or ML engineers who understand model training, hyperparameters, evaluation, and have access to GPU infrastructure. If your organization does not have that in-house, fine-tuning might have a steep learning curve or require external partners.

In terms of project timeline, spinning up a basic RAG prototype can be faster, since you avoid model training and can use an out-of-the-box model plus your data. For example, Amazon’s guidance suggests starting with RAG for a QA chatbot and fine-tuning only if needed. Fine-tuning will extend your development timeline due to the data preparation and experimentation involved. As a rule of thumb, if you’re early in your AI journey (trying out an MVP), RAG may get you to a usable solution quicker with lower initial risk. Fine-tuning becomes more attractive once you have a clearer idea of the task, sufficient data, and the desire to optimize for performance or cost at scale.

And naturally, the hybrid approach is typically a second phase consideration – it requires a fairly mature team that has likely implemented one approach and is ready to layer the other on top.

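The table below summarizes how the three approaches compare across these dimensions:

| Dimension | RAG | Fine-Tuning | Hybrid |
| --- | --- | --- | --- |
| Knowledge Freshness | As current as the latest update to the knowledge store | Frozen at the last training run | Retrieval supplies fresh data; the fine-tuned model covers foundational knowledge |
| Data Labeling & Preparation | Unlabelled documents, chunked and indexed | Curated, labelled examples | Both a labelled dataset and a document store |
| Latency & Cost | Lower upfront cost; retrieval adds latency and cost to every query | Higher upfront training cost; fast inference with no lookup | Costs at both ends |
| Compliance & Traceability | Source citations possible; data stays in an access-controlled store; documents can be deleted or updated directly | Opaque; corrections and removals need a new training run | Citations for retrieved content only |
| Scalability & Maintenance | Add documents without retraining; data pipelines and vector search must be maintained | New knowledge or domains need new training runs; simpler to serve | Periodic retraining plus continuous index maintenance |
| Team Expertise & Project Maturity | Software and data engineering; quick to prototype | ML engineering skills, GPU access, longer timeline | Mature team; typically a second phase |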

Now that we have a clear view of the trade-offs, let’s examine when you would choose each approach, starting with scenarios where RAG is the optimal first choice.

When RAG Is the Right First Choice

Choosing RAG as your initial strategy makes sense in use cases where knowledge is dynamic, broad, or hard to fully enumerate in training data. Here are some situations where RAG shines:

Use Cases Suited to RAG:

- Large or Rapidly Changing Knowledge Bases: If your application needs to draw on a wide range of information that updates frequently (e.g. an enterprise chatbot answering policy questions, or a news analysis tool), RAG is ideal. It can tap into the latest documents or records on demand without retraining. For example, financial services firm Morgan Stanley built an internal advisor assistant that uses GPT-4 with RAG to access tens of thousands of proprietary research documents, allowing advisors to get answers from the most up-to-date firm knowledge. They went from answering 7,000 predefined queries to “effectively any question” over a corpus of 100k documents once RAG was in place. Such flexibility is very hard to achieve with static fine-tuned models.

- Domain Agnostic or Heterogeneous Queries: If your AI needs to handle questions spanning many topics or data sources (as is common with enterprise AI solutions that span departments), RAG may be a more practical approach. You can maintain separate document sets and direct the retriever accordingly instead of trying to incorporate all domain knowledge into a single model. A great example is an internal IT helpdesk assistant – employees might ask about anything from VPN reset steps to vacation policy details. It is easier to store these answers in a knowledge base and retrieve them than to fine-tune a model to memorise all this information. A RAG-enabled HR/IT chatbot can fetch the exact information (e.g. the VPN reset procedure) from internal documents and present it verbatim to ensure accuracy.

- High Accuracy and Traceability Requirements: When you need accurate, verifiable answers, RAG provides reassurance. Since responses can include excerpts from approved documents, business leaders can be more confident that 'the AI is saying exactly what's in the manual' rather than relying on guesswork from models. This is particularly important in legal, compliance and medical applications. For example, a legal AI assistant using RAG could retrieve the relevant clause from a contract or statute to answer a query about regulations, enabling lawyers to verify the information. In regulated industries, having that traceability can be the deciding factor in whether an AI solution is acceptable.

Benefits of Starting with RAG:

RAG deployments tend to be fast to iterate and adjust. If users start asking new types of questions, you can enrich the knowledge base by adding new documents and improve the retrieval algorithms without touching the model. This agility is ideal for evolving products. Additionally, RAG can significantly reduce hallucinations. Since the LLM receives factual text as input, it is less likely to 'make up' answers that go beyond the information provided. Early user feedback can be easily incorporated by tweaking the sources retrieved or the way the prompt is constructed, rather than doing another training cycle.

Another benefit is data security: RAG enables sensitive data to remain on-premises or in your private cloud database, never leaving your controlled environment unless it is part of the prompt. Companies worried about sending data to external APIs or a model leaking confidential information can use RAG to keep sensitive content segregated and ensure it is only used when necessary. For example, you could ensure that the retrieval process only pulls documents that the querying user is authorised to view, thereby implementing fine-grained access control, which would not be possible if all data were incorporated into a model.
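
The access-control idea can be sketched as a filter applied before any text reaches the prompt. The document fields and group names below are hypothetical; in practice the filter would usually be expressed as a metadata filter in the vector database query.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Doc:
    doc_id: str
    text: str
    allowed_groups: frozenset  # groups permitted to read this document

def authorised_docs(candidates: list[Doc], user_groups: set[str]) -> list[Doc]:
    """Keep only documents that share at least one group with the querying user."""
    return [d for d in candidates if d.allowed_groups & user_groups]

candidates = [
    Doc("salary-bands", "Salary bands for 2025 ...", frozenset({"hr"})),
    Doc("vpn-guide", "To reset your VPN password ...", frozenset({"hr", "engineering"})),
]

# An engineer's query can only ever be grounded in documents they may read.
visible = authorised_docs(candidates, user_groups={"engineering"})
visible_ids = [d.doc_id for d in visible]
```

Because the check runs per request, the same model can serve users with different permissions, something that is not possible once documents have been absorbed into model weights.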

Risks and Best Practices:

The main risks associated with RAG are the engineering complexity involved and the potential for gaps in the knowledge base. If your document retrieval isn’t optimised effectively, the LLM may receive irrelevant or low-quality context, resulting in inaccurate responses. One common failure mode is when the system retrieves no relevant information, in which case the model may attempt to provide an answer without sufficient grounding, which can result in inaccuracies. To mitigate this, invest in a good vector embedding model and consider hybrid retrieval strategies, such as combining semantic and keyword searches or re-ranking results. It is also important to chunk your documents effectively (so that each chunk contains a self-contained piece of knowledge) and to store rich metadata that can be used to filter or enhance relevant information.
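
One common way to combine semantic and keyword results is reciprocal rank fusion (RRF), which scores each document by the sum of `1 / (k + rank)` over the rankings it appears in. The sketch below pairs it with a simple score threshold for the "nothing relevant found" case. The document IDs, the constant `k = 60` and the `min_score` value are illustrative assumptions to be tuned for your data.

```python
from typing import Optional

def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document IDs into one list using RRF."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID for deterministic output.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))

def context_or_none(scored_hits: list[tuple[str, float]], min_score: float = 0.3) -> Optional[list[str]]:
    """Drop weak matches; return None so the caller can decline instead of guessing."""
    kept = [doc_id for doc_id, score in scored_hits if score >= min_score]
    return kept or None

semantic_ranking = ["a", "b", "c"]   # e.g. from embedding similarity
keyword_ranking = ["b", "d", "a"]    # e.g. from a keyword search engine
fused = reciprocal_rank_fusion([semantic_ranking, keyword_ranking])
# fused is ["b", "a", "d", "c"]: documents found by both retrievers rise to the top.
```

When `context_or_none` returns `None`, the application can respond that it has no supporting information rather than letting the model answer without grounding.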

Maintaining the knowledge base incurs additional overheads, as you will need processes to regularly ingest new data (e.g. from a feed of your content management system or database) and archive outdated content. In terms of infrastructure, a production RAG system often involves additional components, such as a vector database service (e.g. Pinecone or Redis with vector search) or a similarity-search library such as FAISS, which must be scaled and monitored. This adds an operational burden compared to a simple API model.

One best practice is to start with a smaller pilot, perhaps by enabling RAG on one or two high-value document sets (such as your company policies, FAQs, or product documentation) to see improvements in answer quality. This can build confidence and justify further investment in expanding the knowledge base. Also, always test your RAG system with a variety of queries to ensure that it retrieves the correct information consistently. The model will often answer correctly once the relevant document is found, so the work lies in tuning the retrieval parameters until the right document is found reliably.
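
Testing retrieval with a variety of queries can be as simple as a labelled list of questions and the document each one should surface. The sketch below computes a hit rate at `k`; the stub retriever and test cases are hypothetical and would be replaced by your real retrieval function and queries drawn from actual usage.

```python
from typing import Callable

def hit_rate_at_k(test_cases: list[tuple[str, str]],
                  retrieve: Callable[[str], list[str]],
                  k: int = 3) -> float:
    """Fraction of queries whose expected document appears in the top-k results."""
    hits = sum(1 for query, expected in test_cases if expected in retrieve(query)[:k])
    return hits / len(test_cases)

# Stub retriever standing in for the real pipeline (returns ranked document IDs).
canned_results = {
    "reset vpn": ["vpn-guide", "it-faq"],
    "vacation days": ["it-faq", "holiday-policy"],
    "expense limits": ["travel-policy", "it-faq"],
}

def stub_retrieve(query: str) -> list[str]:
    return canned_results.get(query, [])

cases = [
    ("reset vpn", "vpn-guide"),
    ("vacation days", "holiday-policy"),
    ("expense limits", "expenses-policy"),  # a gap: the right document is never retrieved
]
score = hit_rate_at_k(cases, stub_retrieve, k=2)  # 2 of 3 queries find their document
```

Tracking this number while changing chunk size, embedding model or `k` shows whether a retrieval change actually helps before any answer quality is measured.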

Real-World Examples: Beyond the Morgan Stanley case (finance), many organizations adopted RAG in 2023 for customer support and knowledge management. For example, SaaS companies like Notion and HubSpot reportedly use RAG-based bots to help users query documentation. Even OpenAI’s own ChatGPT plugin ecosystem is essentially a form of RAG, fetching information from third-party services. Microsoft’s Bing Chat is another high-profile example: it uses web search (a form of retrieval) combined with GPT-4 to answer with current information and citations. These examples illustrate that when freshness and breadth of knowledge are needed, RAG is a go-to solution.

In summary, consider RAG first if your AI application’s value depends on large volumes of information or rapidly changing data. It offers a practical way to inject domain knowledge without heavy model tuning, and it keeps you flexible as your content evolves. Next, we’ll look at the flip side – scenarios where fine-tuning the model yields more value.

When Fine-Tuning Delivers More Value

In some situations, fine-tuning an LLM on your data will deliver better results than RAG. Generally, these are cases where the bottleneck is not raw knowledge, but the model’s behavior or specialization. Fine-tuning is the right choice when you need the model to deeply internalize patterns, generate outputs in a specific way, or handle tasks that aren’t just about retrieving facts.

Use Cases Suited to Fine-Tuning:

- Domain-Specific Expertise with Subtle Reasoning: If your application requires complex reasoning or nuanced understanding within a specialised domain, a fine-tuned model could be highly effective. For instance, a medical diagnosis assistant could be fine-tuned using thousands of patient case studies and clinical guidelines to help it 'think' like a doctor when presented with symptoms. The model learns latent associations (e.g. symptom combinations and probable illnesses) that are not explicitly written in any single textbook, which is something that RAG might struggle with unless the exact scenario is documented. Similarly, an engineering troubleshooting assistant could be fine-tuned using past incident reports to learn problem-solving approaches.

- Task-Specific Skills or Behaviors: Fine-tuning is often the only way to teach an LLM new skills or response formats. One example is instruction tuning, which involves training a model to follow a specific set of instructions or ask clarifying questions. As Gwen Shapira notes, if your support chatbot needs to proactively ask users for missing information, RAG won't help because the model lacks the training to exhibit that behaviour, not the data. Fine-tuning using dialogue examples where the assistant asks questions can instil this habit. Another example is code generation in a niche programming language: if the base model hasn’t been trained much on that language, providing a few examples in context may not be effective, but fine-tuning on a corpus of code can significantly improve the model’s proficiency. In essence, whenever you want the model to perform a task that is new or significantly different from its base capabilities, fine-tuning is the solution.

- Consistent Tone, Style, or Branding: Many enterprises are concerned about the tone of their AI-generated content. Fine-tuning is a highly effective way of aligning an LLM with a particular tone or style, as it allows you to train the model using examples of that style. For example, if you are a bank or law firm, you could fine-tune the model using company communications to ensure responses are formal and professional. If you’re developing creative writing AI for marketing purposes, you could fine-tune it using examples from your brand's previous campaigns so that it adopts the same style. Although prompt engineering can enforce certain style guidelines, achieving a consistent persona without fine-tuning is difficult, because the model may deviate if not explicitly trained. OpenAI’s fine-tuning for GPT-3.5 Turbo is a case in point: it lets organisations fine-tune the model to produce outputs that match their preferred tone and formatting, removing the need to add lengthy instructions to every prompt. Fine-tuning 'bakes in' those stylistic preferences.

- Low-Resource Languages or Modalities: If you need the model to understand a language or jargon that wasn’t covered well in the initial training, fine-tuning can help. For example, a company operating in Hebrew or Arabic, which are less common in public datasets, could fine-tune an LLM using bilingual data or texts specific to their field to improve fluency in these languages. Another possibility is multi-modal fine-tuning, in which the model is adapted to accept other inputs, such as structured data or images. However, this is beyond the scope of this text-focused discussion.

Types of Fine-Tuning

It’s worth noting that “fine-tuning” is a broad term. Depending on your needs, you might choose:

- Full model fine-tuning: updating all parameters (rarely needed unless you have a very large dataset).

- LoRA or Adapter fine-tuning: adding small trainable adapter layers – popular for LLMs since it’s memory-efficient.

- Reward-based tuning (RLHF): aligning the model with human preferences, often done after a supervised fine-tune.

- Continual pre-training (domain-adaptive pretraining): technically not supervised fine-tuning, but training the model further on raw domain text to absorb terminology (e.g. continuing to train on legal corpus to get a “Lawyer LLM”).

In the enterprise context, most will use either supervised fine-tuning or PEFT methods for cost efficiency. The good news is that many tools and platforms (Hugging Face Transformers, Azure Custom Text, Amazon SageMaker JumpStart, etc.) have made fine-tuning more accessible in 2024–2025. There are also emerging LLMOps frameworks to manage the fine-tuning lifecycle, from data versioning to experiment tracking.

Data Needs and MLOps: As stressed earlier, fine-tuning is data-hungry. Often, a significant limitation is not the algorithm itself, but rather the lack of a sufficiently large and clean training set for your use case. You may need to combine data from various sources, for example taking internal documents and augmenting them with publicly available data, or generating synthetic examples to cover edge cases. Some companies have even used GPT itself to generate additional training data, such as paraphrasing questions, to enlarge the dataset. However, this must be done carefully to avoid a 'garbage in, garbage out' situation.

Once you have the data, the process involves multiple stages: preprocessing text, possibly labeling it, splitting into train/validation sets, training with proper hyperparameters, and evaluating the fine-tuned model. Having a robust MLOps pipeline is crucial. You’ll want the ability to retrain easily when data is updated, to rollback if a fine-tune version underperforms, and to monitor the model’s outputs over time for drift or biases introduced. Many enterprises treat fine-tuned LLMs as new “models” that must go through the same QA and deployment process as any ML model – including bias testing and safety checks.
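
Two of the pipeline pieces mentioned above, a reproducible train/validation split and the ability to roll back, can be sketched without any ML framework. The hashing scheme, the 10% validation fraction and the `ModelRegistry` class are illustrative assumptions; dedicated experiment-tracking and model-registry tools cover the same ground in production.

```python
import hashlib
from typing import Optional

def assign_split(example_id: str, validation_fraction: float = 0.1) -> str:
    """Deterministically assign an example to 'train' or 'validation' from its ID.

    Hashing the ID keeps each example in the same split across retraining runs.
    """
    digest = hashlib.sha256(example_id.encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0xFFFFFFFF
    return "validation" if bucket < validation_fraction else "train"

class ModelRegistry:
    """Tracks promoted model versions so a bad fine-tune can be rolled back."""

    def __init__(self) -> None:
        self._history: list[str] = []

    @property
    def current(self) -> Optional[str]:
        return self._history[-1] if self._history else None

    def promote(self, version: str) -> None:
        self._history.append(version)

    def rollback(self) -> str:
        if len(self._history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._history.pop()
        return self._history[-1]

splits = {f"ex-{i}": assign_split(f"ex-{i}") for i in range(1000)}

registry = ModelRegistry()
registry.promote("support-bot-v1")
registry.promote("support-bot-v2")   # new fine-tune goes live
restored = registry.rollback()       # v2 underperforms, so production returns to v1
```

Keeping the split stable matters because moving examples between training and validation from one run to the next makes evaluation scores incomparable.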

Model Selection Considerations: If you decide to fine-tune, a key question is which base model to start with. The options range from proprietary models, such as OpenAI's GPT models via API, where fine-tuning is possible but limited, to open-source models, such as Meta's Llama and EleutherAI's models. Using open-source models gives you full control over fine-tuning and deployment on your own infrastructure and helps to avoid potential data-sharing issues. It may also be cheaper for large volumes in the long run. However, unless fine-tuned well, open models might underperform the very latest proprietary ones. Proprietary models fine-tuned via API (OpenAI, Cohere, etc.) can be simpler as the vendor handles the training infrastructure, but your data is typically sent to the vendor’s cloud for training, which some companies prefer to avoid for privacy reasons. Also, not all vendors support fine-tuning their largest models, and availability changes quickly: OpenAI, for example, offered fine-tuning for GPT-3.5 Turbo well before extending it to newer models such as GPT-4o, so check the vendor's current documentation. Therefore, there is sometimes a trade-off between model quality and customisability.

Benefits of Fine-Tuning

The benefits, when done right, are significant:

- You get higher accuracy on specialized tasks – the model is literally optimized for your domain. A fine-tuned model often beats a prompt-based approach in both quality and reliability for narrow tasks (as an example, a fine-tuned 8-billion-parameter model can outperform a generic 175B model on domain-specific queries at a fraction of the cost).

- You can achieve consistent outputs that match your desired format or policy. This reduces the need for post-processing or complex prompt engineering at runtime.

- In some cases, you can reduce overall costs by serving a fine-tuned smaller model instead of calling a giant model with a huge prompt. This is especially true if you plan to scale to many requests, because the upfront training cost is amortized over them.

- Fine-tuning can also help with model bias and correctness on your specific data. For example, if the base model has a bias or a tendency to give a certain kind of response, fine-tuning on more representative data for your user base can mitigate that.
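
The cost argument in the list above comes down to a break-even calculation: the one-time training spend divided by the saving per request. The following is a rough sketch with made-up numbers; the function name and all figures are illustrative assumptions, and real costs also include hosting, evaluation and retraining.

```python
import math


def break_even_requests(training_cost, large_model_cost_per_request, small_model_cost_per_request):
    """Number of requests after which fine-tuning the smaller model pays for itself.

    Returns None when the fine-tuned model is not cheaper per request.
    """
    saving_per_request = large_model_cost_per_request - small_model_cost_per_request
    if saving_per_request <= 0:
        return None
    return math.ceil(training_cost / saving_per_request)


# Illustrative numbers only (not measurements): 1,000 units of training spend,
# 0.50 per request on the large model, 0.25 per request on the fine-tuned one.
print(break_even_requests(1000, 0.50, 0.25))  # prints 4000
```

Below the break-even volume, calling the larger model is the cheaper option; above it, the fine-tuned model wins on cost.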

Risks and Challenges

Fine-tuning is not without its challenges. Overfitting is a risk – if your training examples are limited or too narrow, the model might simply memorize them and fail to generalize (leading to brittle performance). It requires careful validation.
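
A common guard against memorization is to track loss on a held-out validation set and keep the checkpoint from before that loss starts rising. The sketch below shows one simple form of this early-stopping rule; the `patience` value and the loss figures in the usage note are illustrative assumptions.

```python
def best_checkpoint(val_losses, patience=2):
    """Return (best_epoch, stop_epoch) for a list of per-epoch validation losses.

    Training stops once the loss has failed to improve for `patience` epochs in a row.
    """
    best_idx = 0
    for i, loss in enumerate(val_losses):
        if loss < val_losses[best_idx]:
            best_idx = i
        elif i - best_idx >= patience:
            return best_idx, i
    return best_idx, len(val_losses) - 1
```

For example, with validation losses of `[1.0, 0.8, 0.7, 0.75, 0.9, 1.1]` and `patience=2`, the function picks epoch 2 as the best checkpoint and stops at epoch 4, before the loss climbs further.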

Another challenge is that fine-tuning can sometimes degrade performance on things that were previously fine. The model might “forget” some of its broad knowledge or capabilities as it specializes (this is sometimes called catastrophic forgetting). For example, one Oracle blog quipped that a doctor who becomes highly specialized might lose some general touch – similarly a fine-tuned model can lose some of its general conversational ability. This is why teams consider mixing approaches or using multi-task training, or simply accept the trade-off and use separate models for separate tasks.

Also, once you have a fine-tuned model, it is your responsibility to update it. If a critical error is discovered — for example, if the model consistently provides the wrong answer to a particular query — you must add data and retrain it. This process requires more involved maintenance than RAG, where you might simply correct a document. Furthermore, fine-tuned models do not naturally explain their answers or provide sources, which can affect user trust, as previously mentioned.

MLOps & LLMOps Considerations: Deploying a fine-tuned model means that you now have a custom model to operate. This involves setting up serving infrastructure (typically GPUs behind an accelerated inference server) and monitoring the model to ensure it performs well in production. Many companies run a fresh set of A/B tests or evaluations for fine-tuned models to confirm that they genuinely outperform the baseline on target metrics. If the fine-tuning is done on an open model, you will also need to containerise and host the model, which requires significant DevOps effort (though solutions such as NVIDIA’s Triton Inference Server and Hugging Face's text-generation-inference exist to assist with this).
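
The "does it genuinely outperform the baseline" check can start as a simple offline comparison on a fixed evaluation set before any live A/B test. The sketch below is one minimal way to do it, assuming exact-match scoring and models exposed as plain callables; `should_promote`, the `min_gain` threshold and the scoring rule are all hypothetical choices.

```python
def accuracy(predict, eval_set):
    """Fraction of eval examples where the model's answer matches the reference exactly."""
    if not eval_set:
        raise ValueError("eval_set must not be empty")
    correct = sum(
        1
        for question, reference in eval_set
        if predict(question).strip().lower() == reference.strip().lower()
    )
    return correct / len(eval_set)


def should_promote(baseline, candidate, eval_set, min_gain=0.02):
    """Promote the fine-tuned candidate only if it beats the baseline by at least min_gain."""
    base_score = accuracy(baseline, eval_set)
    cand_score = accuracy(candidate, eval_set)
    return {
        "baseline": base_score,
        "candidate": cand_score,
        "promote": cand_score - base_score >= min_gain,
    }
```

Exact match is a crude metric for free-form text; in practice the scoring function would be swapped for whatever target metric the team has agreed on.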

Real-World Examples

- One well-known example of domain-specific fine-tuning is BloombergGPT, where Bloomberg fine-tuned (actually pre-trained and fine-tuned) a 50B parameter model on a huge corpus of financial data. The result was a model that significantly outperformed general LLMs on finance tasks (the Snorkel study mentioned earlier aligns with this theme).

- Another example: Code LLMs like OpenAI’s Codex or Meta’s Code Llama are effectively fine-tuned on code data – and they far exceed general models at programming tasks.

- In the healthcare domain, researchers have fine-tuned models on medical transcripts to create doctor’s assistants that pass medical exams (e.g. Med-PaLM by Google is a fine-tuned variant of PaLM for medicine).

- On a smaller scale, companies are fine-tuning internal versions of Llama-2 for things like customer support chat. These don’t always make headlines, but it’s happening widely as tools become available.

If your use case aligns with needing deeply tailored output, new model behaviors, or highly specialized knowledge that isn’t all explicitly written down in documents, fine-tuning delivers more value. It’s an upfront investment that pays off in smoother, smarter interactions within that scope. Just be sure you have (or partner with) the necessary ML expertise to execute it well.

Hybrid Approaches: Combining RAG and Fine-Tuning

As we have seen, RAG and fine-tuning complement each other. It’s no surprise, then, that many teams in 2024–2025 are pursuing hybrid architectures that combine the two, with the aim of maximising accuracy and minimising hallucinations while staying up to date. While a hybrid approach can take different forms, the common theme is that both a knowledge retrieval component and a customised model are used together in the solution.

Pattern: Fine-Tuned Model + Retrieval at Runtime (RAFT)

We already introduced RAFT (Retrieval-Augmented Fine-Tuning) above. This is the most straightforward hybrid pattern and arguably the most powerful today. Here’s how it works in practice:

- You fine-tune an LLM on your core domain data or interactions. This gives it deep understanding of your context, terminology, and the style of responses you want.

- You deploy this model behind a RAG system, where at query time it is fed relevant documents from a vector database (just like a RAG pipeline) in addition to the user’s question.

- The fine-tuned model, being both domain-knowledgeable and context-aware, uses the retrieved info plus its own learned expertise to produce the answer.

This model should make better use of retrieved evidence than a generic model given the same context, since fine-tuning typically trains it to follow instructions and integrate supplied information, and its responses should be more polished as a result. According to Oracle’s blog, the combination yields “highly accurate, relevant and context-aware outputs” that are nuanced and timely. In practice, fine-tuning can improve the way the model contextualises the retrieved data. For instance, a fine-tuned model may be more effective at referencing the data or drawing accurate conclusions from it, whereas a base model may occasionally misinterpret a snippet.
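
The three steps of this pattern can be sketched end to end in a few lines. This is a toy illustration under stated assumptions: a bag-of-words similarity stands in for a real embedding model and vector database, and `generate` is a hypothetical callable wrapping whatever fine-tuned model you deploy.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy 'embedding': a bag-of-words count vector (stand-in for a real embedding model)."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two Counter vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, documents, k=2):
    """Return the k documents most similar to the query."""
    query_vec = embed(query)
    ranked = sorted(documents, key=lambda doc: cosine(query_vec, embed(doc)), reverse=True)
    return ranked[:k]


def build_prompt(query, passages):
    """Place the retrieved passages ahead of the user's question."""
    context = "\n".join(f"[{i + 1}] {p}" for i, p in enumerate(passages))
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"


def answer(query, documents, generate, k=2):
    """`generate` is a hypothetical callable wrapping the fine-tuned model."""
    return generate(build_prompt(query, retrieve(query, documents, k)))
```

The only difference from a plain RAG pipeline is what sits behind `generate`: here it would be the fine-tuned model rather than a generic one.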

Pattern: Retrieval to Augment Fine-Tuning Data

Another hybrid pattern involves using retrieval techniques in the training loop. For example, some advanced workflows retrieve related documents to supplement each training example, enabling the model to learn to incorporate information from documents. While this is more of a research-based approach (there are papers on 'augmenting fine-tuning with retrieval'), it can help the model learn how to use a knowledge base. In a sense, you are simulating RAG during training.
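
To make "simulating RAG during training" concrete, the sketch below builds one training example whose prompt contains the relevant document mixed with a few others from the corpus. Mixing in unrelated documents as distractors is an assumption of this sketch, as are the function name and the prompt/completion record layout; your training framework may expect a different format.

```python
import random


def make_training_example(question, answer, gold_doc, corpus, n_distractors=2, rng=None):
    """Build a prompt/completion pair whose context mixes the relevant document with distractors."""
    if rng is None:
        rng = random.Random(0)  # fixed seed so the dataset is reproducible
    candidates = [doc for doc in corpus if doc != gold_doc]
    distractors = rng.sample(candidates, min(n_distractors, len(candidates)))
    context = distractors + [gold_doc]
    rng.shuffle(context)  # avoid teaching the model that the answer is always last
    context_block = "\n".join(f"- {doc}" for doc in context)
    prompt = f"Context:\n{context_block}\n\nQuestion: {question}\nAnswer:"
    return {"prompt": prompt, "completion": " " + answer}
```

Training on examples shaped like this exposes the model to the same prompt layout it will see at inference time, including passages it should ignore.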

Pattern: Multi-Stage Systems (Agents)

A broader view of hybrid systems involves using different models for different subtasks within an AI application. For example, you could have a system in which the first stage uses RAG to gather information, the second stage uses a fine-tuned model to analyse it and the third stage uses a model fine-tuned for formatting to write the answer. These multi-component pipelines essentially combine fine-tuned and retrieval components in a chain. One example could be a customer support workflow: first, relevant troubleshooting steps are retrieved; then, a fine-tuned model rephrases them as a friendly answer.

However, the more components there are, the greater the complexity. A fully hybrid approach should only be attempted when simpler architectures do not meet your requirements.
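
The customer support workflow described above can be sketched as a chain of stages that each read and extend a shared state. This is a deliberately small illustration: a dictionary stands in for the retriever, and `draft_reply` is a placeholder for a call to a fine-tuned model; both names are hypothetical.

```python
def retrieve_steps(state):
    """Stage 1: look up troubleshooting steps (a dict stands in for the retriever)."""
    steps = state["knowledge_base"].get(state["issue"], [])
    return {**state, "steps": steps}


def draft_reply(state):
    """Stage 2: placeholder for a fine-tuned model that rewrites steps in a friendly tone."""
    if not state["steps"]:
        reply = "Sorry, I could not find troubleshooting steps for that issue."
    else:
        numbered = " ".join(f"{i}. {step}" for i, step in enumerate(state["steps"], start=1))
        reply = f"Happy to help! Please try the following: {numbered}"
    return {**state, "reply": reply}


def run_pipeline(stages, state):
    """Run each stage in order, passing the accumulated state along."""
    for stage in stages:
        state = stage(state)
    return state
```

Keeping each stage a plain function makes it easy to test stages in isolation and to remove one if it turns out not to earn its complexity.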

When to Use Hybrid

If you find yourself saying “We really need both – the model needs to be smarter and we need real-time data,” it’s a sign that a hybrid might be appropriate. Common triggers:

- After implementing RAG, you notice the model’s answers are factually correct but not well-structured or not in the desired tone. Fine-tuning the model could help it present the retrieved facts better.

- After fine-tuning a model, you realize it’s great on in-domain queries but still fails whenever there’s a question about something updated (e.g., “What’s the latest price of X?”). Adding RAG can supply those missing pieces without retraining the model constantly.

- The use case has a stable core (which can be in the model) and a dynamic periphery (which can be in external data). For example, an e-commerce assistant might have a fine-tuned model that knows all about the product catalog structure and typical Q&A, but it uses retrieval to get the inventory status or latest reviews.

One concrete scenario: Imagine an insurance company’s chatbot. The rules and coverage details rarely change (so you fine-tune a model on all policy documents and Q&A examples – it becomes an insurance expert). But the customer-specific info (their claims status, their personal data) is dynamic and private – you wouldn’t fine-tune the model on each customer’s data. Instead, you use RAG to fetch customer-specific details from a database at runtime. The fine-tuned model then merges its general insurance knowledge with the customer’s particular data for a personalized, accurate answer. This hybrid design is common in enterprise applications combining general intelligence with user-specific or context-specific data.
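
In this scenario the "retrieval" step is often a plain database lookup rather than a vector search. The sketch below illustrates the idea with an in-memory SQLite table; the schema, identifiers and rows are invented for the example, and the resulting prompt would be passed to the fine-tuned model.

```python
import sqlite3


def fetch_claims(conn, customer_id):
    """Fetch one customer's claims at query time; nothing here is baked into model weights."""
    rows = conn.execute(
        "SELECT claim_id, status FROM claims WHERE customer_id = ? ORDER BY claim_id",
        (customer_id,),
    ).fetchall()
    return [{"claim_id": claim_id, "status": status} for claim_id, status in rows]


def build_customer_prompt(question, claims):
    """Combine the customer's records with their question."""
    if claims:
        facts = "\n".join(f"- Claim {c['claim_id']}: {c['status']}" for c in claims)
    else:
        facts = "- No claims on file."
    return f"Customer records:\n{facts}\n\nQuestion: {question}\nAnswer:"


# Demo with an in-memory database and made-up rows.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE claims (claim_id TEXT, customer_id TEXT, status TEXT)")
conn.executemany(
    "INSERT INTO claims VALUES (?, ?, ?)",
    [("C-1", "cust-42", "approved"), ("C-2", "cust-42", "under review"), ("C-3", "cust-7", "paid")],
)
prompt = build_customer_prompt("What is the status of my claims?", fetch_claims(conn, "cust-42"))
```

The parameterised query restricts the lookup to the requesting customer, so another customer's rows never reach the prompt.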

Complexity and Trade-offs

Hybrid systems are the most complex of the three options. You need all the skills and resources for both approaches:

- A data engineering pipeline for documents.

- A training pipeline for the model.

- Serving infrastructure that can handle the model inference and the vector search efficiently, possibly even co-located to minimize latency.

One trade-off is that combining approaches yields diminishing returns when one of them already covers most of the need. For instance, if your fine-tuned model already knows 95% of what users ask and the domain doesn’t change often, adding RAG might not justify the effort. Alternatively, if RAG already gives great answers and style/tone isn’t critical for your use case (for an internal tool, say), fine-tuning might be overkill.

So while hybrid is powerful, it’s not always needed – many successful solutions use one method or the other in isolation to keep things simpler.

Best of Both, But Worst of Both

It’s jokingly said that a hybrid approach can be the best of both worlds or the worst of both worlds. To ensure it’s the former, only pursue a hybrid approach when you can clearly identify shortcomings in each individual approach that the other can fix. Plan for a rigorous testing phase to check that your fine-tuned model isn’t ignoring retrieval context (some models rely too heavily on their internal knowledge if they are not trained to use retrieved information), and also to ensure that retrieval is still effective. You may need to iterate on the prompt format when using a fine-tuned model plus RAG. For example, your prompt for the hybrid model could explicitly instruct: 'Use the provided information to answer, and default to it over your own knowledge'. Some research suggests that fine-tuned models can be less well calibrated about what they do and do not know (because they have been trained on a narrower domain), so you need to ensure that the hybrid model does not overestimate its expertise and make assumptions that go beyond the information in the documents. Techniques such as prompting the model to quote the source or adding a verification step for the retrieved information can help.
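
One way to test whether a model is ignoring retrieval context is a counterfactual check: supply a passage containing a fact the model could not know from training and see whether the answer reflects it. The sketch below is a minimal version, assuming the model is exposed as a `generate` callable (hypothetical); the second sentence of the instruction is an addition made for this example.

```python
GROUNDING_INSTRUCTION = (
    "Use the provided information to answer, and default to it over your own knowledge. "
    "If the information does not contain the answer, say that you do not know."
)


def build_grounded_prompt(question, passages):
    """Prefix the grounding instruction and numbered passages to the question."""
    sources = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return f"{GROUNDING_INSTRUCTION}\n\nInformation:\n{sources}\n\nQuestion: {question}\nAnswer:"


def follows_context(generate, question, passages, expected_phrase):
    """Counterfactual check: does the answer reflect a fact that only appears in the passages?"""
    reply = generate(build_grounded_prompt(question, passages))
    return expected_phrase.lower() in reply.lower()
```

Running a batch of such checks before and after fine-tuning gives a rough signal of whether training has made the model more or less willing to defer to the supplied information.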

Emerging Trends: An emerging variant of hybrid is combining LLMs with structured knowledge (e.g., knowledge graphs). For example, a system might retrieve vector-based text and also query a knowledge graph or database for factual answers, then combine the results. In 2024–2025, we see early adopters building these multi-retrieval hybrids to reduce errors. Another trend is tool use (the model calls external tools such as calculators or search engines), which can be seen as an extension of RAG; the ReAct pattern, which interleaves reasoning steps with tool actions, is one well-known example. These are beyond our scope, but it is worth noting that hybrid can extend in many creative directions.

To sum up, hybrid approaches are powerful for mission-critical AI applications where you need maximal performance: think of an enterprise virtual assistant that must be both deeply knowledgeable and always up-to-date. If you have the resources and the need, combining fine-tuning with RAG can significantly reduce hallucinations and improve user satisfaction – at the cost of a more complex system.

Step-by-Step Decision Playbook

With all these factors to consider, how should you decide which approach to take for your own custom AI project? Here’s a step-by-step guide to help CTOs and product leaders choose the right path:

Step 1: Define the Primary Goal for Your AI Application. Does the main challenge lie in the fact that the AI needs access to a lot of proprietary knowledge? Or does the AI need to perform a specific task extremely well, such as writing code or drafting marketing copy in your style? Clarifying whether knowledge access or task expertise is the bigger driver will immediately indicate whether RAG or fine-tuning is more appropriate. If knowledge access is important (i.e. if you have a wealth of data that the AI must use), then RAG is the better option. If a highly specialised skill or style is important, consider fine-tuning.

Step 2: Assess How Dynamic Your Knowledge Is. Ask: 'Will the facts/content that my model needs to know change frequently?' If the answer is yes, for example if product details are updated weekly or if regulations evolve, then having a retrieval mechanism will be very valuable in the long term. However, if the content is relatively static or changes only infrequently, fine-tuning becomes a more viable option, since retraining will not be necessary very often. Internal policies that update annually, for instance, could be incorporated into a model via fine-tuning, whereas daily news or real-time data demand a RAG approach.

Step 3: Inventory the Data You Have Available. Do you already have a collection of documents (such as wikis, PDFs and knowledge bases) that cover your field? Or do you have a well-labelled dataset of examples, or the capacity to create one? If you have lots of text that is not in Q&A format, this lends itself more easily to RAG – you can index those texts. If you have many example question/answer pairs, or can generate them, this lends itself to fine-tuning. If you have both, you can do either or both. If you have neither, you will still need to gather data, but RAG can sometimes proceed with less. You could start by indexing a small set of core documents, gradually building a fine-tuning dataset from transcripts or logs as you go along.

Step 4: Consider Requirements for Output Quality and Traceability. Is it important for your use case that the AI’s answers come with sources or justification (for user trust or compliance)? If yes, that’s a strong vote for RAG (or at least hybrid) because fine-tuned answers won’t inherently cite sources. Also, think about error tolerance: if a hallucinated answer could be harmful or unacceptable, you either need RAG to ground answers or a heavily fine-tuned model with narrow scope that minimizes guesswork. Many enterprises start with RAG specifically to mitigate the risk of the model “making stuff up” in sensitive scenarios.

Step 5: Evaluate Latency and Throughput Needs. For certain applications, such as high-volume transactional systems or real-time assistants, adding a retrieval step could make responses too slow or too resource-intensive. Fine-tuned models, by contrast, can be optimised and distilled to respond very quickly. If you require sub-second responses or will be handling thousands of queries per second, examine whether the RAG overhead is feasible. There are ways to cache results or optimise RAG, but this is a factor to consider. If moderate latency (e.g. 1–2 seconds) is acceptable and the query load is manageable, RAG is suitable. Also consider costs: would you prefer to spread the costs out or make a one-time training investment? If your budget cannot currently accommodate the upfront training cost, it might be easier to start with RAG using an existing model.
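
Caching is the simplest of the RAG optimisations mentioned here: if many users ask the same question, the retrieval step only needs to run once. The sketch below wraps an arbitrary `retrieve` function (hypothetical) with Python's `functools.lru_cache`, normalising case and whitespace first; the normalisation rule and cache size are assumptions, and a real system would also need to invalidate entries when the index changes.

```python
from functools import lru_cache


def make_cached_retriever(retrieve, maxsize=1024):
    """Wrap a retrieval function so repeated queries skip the expensive lookup."""

    @lru_cache(maxsize=maxsize)
    def cached(normalized_query):
        # Tuples are immutable, so callers cannot corrupt the cached result.
        return tuple(retrieve(normalized_query))

    def lookup(query):
        # Normalise case and whitespace so trivially different queries share an entry.
        return cached(" ".join(query.lower().split()))

    lookup.cache_info = cached.cache_info
    return lookup
```

Calling `lookup.cache_info()` exposes the hit and miss counts, which helps to judge whether the cache is actually reducing retrieval load.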

Step 6: Check Your Team’s Capabilities and Timeline. Do you have in-house machine learning engineers familiar with fine-tuning large models? If not, are you willing to hire or wait to acquire that expertise? If the answer is no and you need a solution quickly, RAG is generally simpler to implement with software engineers. If you do have a strong ML team or a partner, fine-tuning is on the table. Also, what’s your development timeline? If you need a prototype live in a month, fine-tuning a model (especially if data prep isn’t done) might be unrealistic – RAG could get you there faster by leveraging an existing model and your documents.

Step 7: Identify Any Extreme Requirements (Security, IP, Offline, etc.). If your application must run fully offline or on-premises (no external API calls), you might choose an open-source model and fine-tune it – RAG itself can be on-prem too, but if you were considering using something like OpenAI’s API for RAG, that might be off the table due to data residency. Alternatively, if your data is extremely sensitive and you don’t even trust it in model training, you might lean towards RAG so that the data stays in a database and not in model weights. For intellectual property reasons, some companies prefer owning a fine-tuned model vs. relying on a third-party LLM service at runtime. All these operational constraints can influence the choice.

Step 8: Consider a Phased Approach. This is crucial – the decision doesn’t have to be permanent. In many cases the best route is: start with RAG (quick to get something working with basic Q&A), then as you gather user interactions and more data, consider fine-tuning to improve quality, and ultimately move to a hybrid to tackle any remaining gaps. Or vice versa: start with a fine-tuned model if you already have one for a specific task, and later bolt on retrieval if users start asking things outside its training. Plan for iteration. It’s often wise to deliver a minimum viable product with the simpler approach, validate its value, then iterate with more complexity (like hybrid) if needed.

Step 9: Use Decision Checklists (like above) to Double-Check. It can be helpful to go through a quick scorecard after the above steps. For example:

- Do we need real-time up-to-date info? (Yes → RAG or Hybrid)

- Is domain expertise or a specific behavior critical? (Yes → Fine-tune or Hybrid)

- Do we have lots of content but not labeled examples? (Yes → RAG first)

- Do outputs need to show sources or quotes? (Yes → RAG or Hybrid)

- Are runtime costs a big concern relative to training cost? (If calling an API per query is expensive, fine-tune a smaller model; if training cost is a barrier, use RAG to avoid it.)

- Do we have strict latency/throughput requirements? (If yes, lean fine-tune for simplicity, or plan a very optimized RAG system)

- Does our team have the expertise to train and deploy models? (If not, RAG is the path of least resistance for now.)
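
The scorecard can be turned into a small tally to make team discussions more concrete. This is only a sketch: the answer keys, the equal weighting of every question and the rule that mixed votes point to hybrid are assumptions made for illustration, not a validated decision model.

```python
def recommend_approach(answers):
    """Tally yes/no checklist answers into votes for RAG and fine-tuning.

    The keys and the equal weighting are assumptions made for this sketch.
    """
    rag_votes = sum([
        answers.get("needs_fresh_info", False),
        answers.get("content_without_labels", False),
        answers.get("needs_sources", False),
        not answers.get("has_ml_expertise", True),
    ])
    fine_tune_votes = sum([
        answers.get("needs_specialised_behaviour", False),
        answers.get("strict_latency", False),
        answers.get("per_query_api_cost_too_high", False),
    ])
    if rag_votes and fine_tune_votes:
        choice = "hybrid (possibly phased)"
    elif rag_votes:
        choice = "RAG"
    elif fine_tune_votes:
        choice = "fine-tuning"
    else:
        choice = "no strong signal; start with the simpler option"
    return {"rag_votes": rag_votes, "fine_tune_votes": fine_tune_votes, "recommendation": choice}
```

A tally like this is a conversation starter rather than a verdict; a single hard constraint, such as an on-premises requirement, can outweigh several softer votes.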

Answering these questions often makes the best choice clear. If there is a mix of needs, this suggests that a hybrid solution may eventually be required. However, remember that you can implement solutions sequentially: it’s valid to use one approach as a stepping stone to the other.

Finally, don’t forget to measure and iterate. Whichever approach you start with, collect feedback and performance data. If users are unhappy with the quality of the responses, analyse whether the issue is missing information (suggesting the need to add RAG or expand the KB) or an incorrect tone or format (suggesting the need for fine-tuning). This empirical mindset will guide your roadmap – you may decide to adopt an approach that you initially avoided once you have evidence that it is needed.
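
If feedback is tagged by cause, this analysis can be as simple as counting the tags. The sketch below assumes a hypothetical two-label scheme matching the two failure types named above; real feedback will need more categories and some manual review.

```python
from collections import Counter

# Hypothetical mapping from feedback labels to the remedy suggested in the text.
REMEDIES = {
    "missing_information": "add RAG or expand the knowledge base",
    "wrong_tone_or_format": "consider fine-tuning",
}


def triage_feedback(labels):
    """Count labelled complaints and return the remedy for the most common known issue."""
    counts = Counter(label for label in labels if label in REMEDIES)
    if not counts:
        return None
    top_label, _ = counts.most_common(1)[0]
    return REMEDIES[top_label]
```

Labels outside the two known categories are ignored, and the function returns `None` when no recognised complaints are present.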

In essence, this playbook encourages you to weigh what the AI needs to know vs how it needs to act, consider the practical constraints, and choose an approach that addresses the most critical needs first. And always keep the option open to evolve the solution as you learn more.

Conclusion

The decision between retrieval-augmented generation, fine-tuning, or a hybrid approach is a strategic one that will shape the success of your custom AI initiative. There is no one-size-fits-all answer – the optimal architecture depends on your use case’s specific needs for knowledge, performance, and flexibility.

Critically, this choice is not final or exclusive. In 2025 and beyond, many organizations treat the RAG vs fine-tune decision as an iterative journey. You might start with one method to get initial capability and user feedback, then evolve your solution by incorporating the other method as requirements grow. The best practice is to stay agile: monitor how your AI is performing on real data and be ready to adapt.

When making this architectural decision, consult stakeholders from both the business and technical sides. Consider user experience (e.g. do users need answers with sources, or do they expect a certain tone?), regulatory constraints (e.g. are we allowed to use certain data for model training, or only for a safeguarded database?), and long-term maintainability (e.g. can we sustain frequent model retraining, or would we prefer to update the data?). A well-chosen approach can save significant costs, whether by avoiding unnecessary training cycles or preventing costly mistakes caused by outdated answers.

Finally, remember that you don’t have to go it alone. Engaging experts, such as experienced ML operations professionals or an AI software development company like Intersog, can help you navigate these choices with confidence.

As you move forward with your generative AI applications, use the insights from this guide to inform your decisions. The technology will continue to advance, but the core principle remains: focus on delivering the best possible user experience with the minimum necessary complexity, and evolve thoughtfully.

Of course, we’ll continue to advance as well. This article is only Part 1 of our guide. Stay tuned and subscribe so that you don’t miss the follow-up.
